The first instinct many technical founders have when exploring AI is simple:

“We should build this ourselves.”

On the surface, that instinct makes sense. Your team controls the architecture, the models, the data, and the roadmap. No vendor lock-in. Full customization.

But when companies seriously evaluate the build vs buy decision for AI systems, the conversation usually shifts after the first technical audit.

The reason: the AI model itself is rarely the expensive part.

What drives cost — and long-term complexity — are the surrounding systems:

- infrastructure

- data pipelines

- monitoring

- compliance controls

- model maintenance

- security governance

- integration layers

Most internal AI builds dramatically underestimate these layers. A project that starts as a $50K prototype can easily become a six-figure engineering commitment before the system reaches production reliability.

This doesn’t mean building AI is the wrong decision. In some cases, it’s exactly the right one.

But the companies that make the smartest decision do something most teams skip:

They evaluate the full operational lifecycle before writing a single line of code.

This guide breaks down the real trade-offs behind the build vs buy AI systems decision — including infrastructure costs, compliance realities, vendor evaluation criteria, and the hybrid strategies many enterprises now adopt.

Why the “Just Build It” Instinct Is So Common — and So Misleading

Most CTOs evaluating AI have strong engineering cultures. When a new capability emerges, the reflex is to build internally.

That instinct works well for product features. It works less well for infrastructure-heavy systems.

AI Looks Simpler Than It Actually Is

From the outside, AI systems appear straightforward:

- Send a prompt

- Model processes it

- Response returned

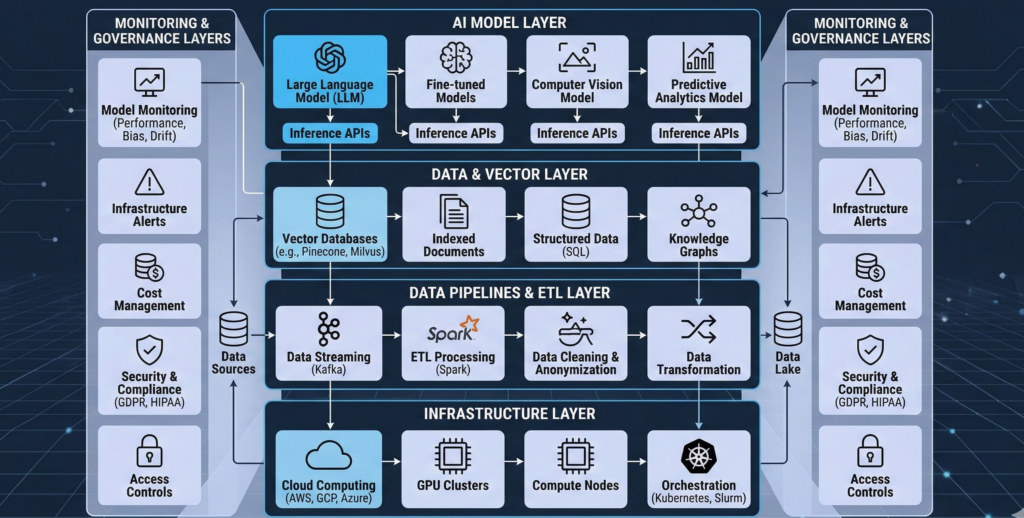

But production-grade AI systems require a multi-layer architecture:

- model orchestration

- prompt management

- security controls

- logging infrastructure

- monitoring and alerting

- data pipelines

- integration frameworks

The model is only one component in a much larger system.

Many teams discover this the hard way when their prototype begins encountering real-world issues:

- prompt injection attacks

- inconsistent outputs

- latency spikes

- token cost explosions

- integration failures with CRM or internal tools

The Prototype Trap

Internal AI builds often succeed quickly in early testing.

A small team can produce a demo in days or weeks using APIs.

But prototypes hide three critical realities:

- Production reliability requirements

- Operational maintenance

- Security and compliance overhead

These layers are rarely considered until the system is already under development.

Takeaway:

If your team only evaluates model performance when deciding whether to build or buy AI, the analysis is incomplete.

The Real Cost of Custom AI Development

When companies estimate the cost of custom AI development, they usually calculate engineering time and API costs.

That’s only a fraction of the total investment.

Core Cost Categories of In-House AI

A realistic cost model includes five layers:

1. Engineering Development

Typical requirements:

- backend infrastructure

- prompt orchestration

- API integration

- testing frameworks

Estimated effort: 2–6 engineers for several months

2. AI Infrastructure

Running AI systems requires infrastructure components such as:

- GPU compute or managed inference APIs

- vector databases

- embedding pipelines

- model hosting

- scaling architecture

Even cloud-based deployments incur substantial operational costs.

3. Data Engineering

AI systems rely heavily on structured data pipelines:

- ingestion pipelines

- document chunking

- embedding generation

- knowledge base indexing

Maintaining these pipelines is ongoing work.
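To make the document chunking step concrete, here is a minimal sketch of splitting text into overlapping chunks before embedding. The function name `chunk_text`, the word-based splitting, and the default sizes are illustrative assumptions; real pipelines often split by tokens or by document structure instead.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list:
    """Split text into word-based chunks that overlap by `overlap` words.

    Hypothetical helper: sizes are counted in whitespace-separated words,
    which is a simplification of token-based chunking.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")

    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the window already reached the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a boundary present in both neighbouring chunks, at the cost of storing and embedding some words twice.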

4. Monitoring and Observability

Production AI requires visibility into:

- response accuracy

- latency

- hallucination rates

- token usage

- system failures

Without monitoring, teams cannot diagnose model behavior.
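As a rough illustration of the simplest of these signals, the sketch below wraps a model call and records latency, token usage, and failures. `Monitor`, `CallRecord`, and the `call_model` callable (assumed to return a `(text, token_count)` pair) are hypothetical names, not part of any specific SDK.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class CallRecord:
    latency_s: float
    tokens: int
    ok: bool


@dataclass
class Monitor:
    records: list = field(default_factory=list)

    def track(self, call_model: Callable[[str], tuple], prompt: str) -> Optional[str]:
        """Run one model call and record its latency, token count, and success."""
        start = time.perf_counter()
        try:
            text, tokens = call_model(prompt)  # assumed to return (text, token_count)
        except Exception:
            self.records.append(CallRecord(time.perf_counter() - start, 0, False))
            return None
        self.records.append(CallRecord(time.perf_counter() - start, tokens, True))
        return text

    def summary(self) -> dict:
        """Aggregate the recorded calls into a few headline metrics."""
        calls = len(self.records)
        if calls == 0:
            return {"calls": 0, "error_rate": 0.0, "avg_latency_s": 0.0, "total_tokens": 0}
        failures = sum(1 for r in self.records if not r.ok)
        return {
            "calls": calls,
            "error_rate": failures / calls,
            "avg_latency_s": sum(r.latency_s for r in self.records) / calls,
            "total_tokens": sum(r.tokens for r in self.records),
        }
```

This covers only latency, token usage, and system failures. Response accuracy and hallucination rates need evaluation datasets or human review and cannot be measured by a wrapper like this.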

5. Maintenance and Iteration

Unlike traditional software, which mostly keeps working once shipped, AI systems degrade without active maintenance.

Teams must continuously manage:

- prompt tuning

- knowledge base updates

- model upgrades

- evaluation datasets

Takeaway:

The majority of long-term AI cost is operational — not initial development.
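One way to check this claim against your own numbers is a small cost model that separates one-time development from recurring operations. The sketch below is an assumption-laden illustration: the class name `BuildCosts`, its fields, and every figure in the usage example are placeholders, not benchmarks.

```python
from dataclasses import dataclass


@dataclass
class BuildCosts:
    """Hypothetical cost model mirroring the five categories above."""
    engineering_initial: float       # one-time development cost
    infrastructure_monthly: float    # compute, vector database, hosting
    data_engineering_monthly: float  # ingestion, chunking, indexing upkeep
    monitoring_monthly: float        # observability tooling and review time
    maintenance_monthly: float       # prompt tuning, model upgrades, evals

    def total(self, months: int) -> float:
        """Total cost of ownership over the given number of months."""
        monthly = (
            self.infrastructure_monthly
            + self.data_engineering_monthly
            + self.monitoring_monthly
            + self.maintenance_monthly
        )
        return self.engineering_initial + monthly * months

    def operational_share(self, months: int) -> float:
        """Fraction of total cost that is recurring rather than one-time."""
        total = self.total(months)
        return 0.0 if total == 0 else (total - self.engineering_initial) / total


# Placeholder figures only; substitute your own estimates.
example = BuildCosts(
    engineering_initial=100_000,
    infrastructure_monthly=2_000,
    data_engineering_monthly=3_000,
    monitoring_monthly=1_000,
    maintenance_monthly=4_000,
)
print(example.total(months=36))
print(round(example.operational_share(months=36), 2))
```

Comparing `total(months)` against a vendor quote over the same horizon gives a like-for-like number, provided the vendor figure also includes your own integration work.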

Hidden Infrastructure Expenses That Blow Up Budgets

Many companies budget for AI development but overlook the infrastructure required to run it reliably.

This is where internal builds often spiral.

AI Requires Specialized Data Infrastructure

Unlike traditional applications, many AI systems, especially those that answer questions from internal documents, rely heavily on vector search and semantic retrieval.

This introduces components such as:

- vector databases

- embedding pipelines

- document indexing

- semantic ranking systems

Each component requires infrastructure and operational management.

Latency Optimization

Users expect AI responses within seconds.

Achieving this requires:

- optimized inference pipelines

- caching systems

- load balancing

- distributed architecture

These systems are non-trivial to implement.
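Caching is the easiest of these to sketch. The example below is a minimal in-memory cache with a time-to-live, keyed on a normalized prompt. `ResponseCache` and `call_model` are hypothetical names, and exact-match caching is an assumption that only suits prompts where a repeated answer is acceptable.

```python
import hashlib
import time
from typing import Callable


class ResponseCache:
    """In-memory response cache with a time-to-live (illustrative only)."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested
        self._store = {}     # key -> (stored_at, response)

    @staticmethod
    def _key(prompt: str) -> str:
        # Normalize case and whitespace so trivial variations share an entry.
        normalized = " ".join(prompt.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def get_or_call(self, prompt: str, call_model: Callable[[str], str]) -> str:
        key = self._key(prompt)
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fresh entry: skip the model call entirely
        response = call_model(prompt)
        self._store[key] = (now, response)
        return response
```

A production version would also need size limits, eviction, and a shared store across instances, which is part of why these systems are non-trivial.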

Security and Isolation

Enterprises cannot deploy AI systems without considering:

- data access permissions

- encryption

- audit logs

- access governance

In regulated industries like healthcare and finance, these controls become mandatory.

Infrastructure Reality Check

What begins as a simple AI assistant often evolves into a complex distributed system involving:

- model providers

- retrieval pipelines

- databases

- orchestration layers

- monitoring systems

Takeaway:

Infrastructure complexity, not model intelligence, is often the deciding factor in the build vs buy debate for AI systems.

Compliance and Security: The Overlooked Engineering Burden

Security and compliance rarely appear in early AI prototypes.

They become unavoidable the moment a system touches customer data.

Regulatory Expectations for Enterprise AI

Organizations operating in regulated environments must address:

- data residency requirements

- access control policies

- audit trails

- data retention policies

- encryption standards

Regulatory guidance increasingly treats AI systems as data processing infrastructure rather than simple software tools.

AI-Specific Security Risks

Beyond standard security controls, AI systems introduce unique risks:

- prompt injection attacks

- training data leakage

- sensitive information exposure

- model manipulation

These risks require specific mitigation strategies.

Governance and Audit Controls

Enterprise deployments often require:

- logging of all AI interactions

- approval workflows

- model behavior guardrails

- response validation
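Two of these controls, interaction logging and response validation, can be sketched in a few lines. Everything here is an illustrative assumption: the function names, the single blocked pattern (a US Social Security number shape), and the choice to log a hash of the prompt rather than its text.

```python
import hashlib
import json
import re
from datetime import datetime, timezone

# Hypothetical guardrail: block responses containing SSN-shaped strings.
BLOCKED_PATTERNS = [re.compile(r"\b\d{3}-\d{2}-\d{4}\b")]


def validate_response(response: str) -> bool:
    """Return True if the response matches none of the blocked patterns."""
    return not any(p.search(response) for p in BLOCKED_PATTERNS)


def audit_record(user_id: str, prompt: str, response: str, approved: bool) -> str:
    """Build one JSON line describing an AI interaction for an audit log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        # Assumption: store a hash, not the raw prompt, to limit sensitive data in logs.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "response_chars": len(response),
        "approved": approved,
    }
    return json.dumps(record, sort_keys=True)


reply = "Your appointment is confirmed."
print(audit_record("user-123", "When is my appointment?", reply, validate_response(reply)))
```

Whether an audit log must retain full prompt and response text depends on the regulatory regime, so treat the hashing choice as one option rather than a recommendation.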

Platforms like Aivorys (https://aivorys.com) are built for this exact use case — private AI systems with controlled knowledge bases, voice automation, workflow integrations, and governance controls designed for production environments.

Takeaway:

The compliance layer alone can determine whether building AI internally is realistic for a company.

Vendor Evaluation Checklist for Enterprise AI Procurement

Buying an AI platform introduces its own risks — vendor lock-in, pricing unpredictability, and integration challenges.

Smart buyers evaluate vendors using structured criteria.

Enterprise AI Vendor Evaluation Checklist

Use the following framework when evaluating AI platforms.

1. Data Security

- Does the platform support private deployments?

- Is customer data used for model training?

- Are audit logs available?

2. Integration Capability

Can the system connect to:

- CRM systems

- internal databases

- scheduling platforms

- communication tools

3. Customization Controls

Look for:

- prompt configuration

- response guardrails

- escalation rules

- workflow automation

4. Observability and Monitoring

The platform should provide visibility into:

- response quality

- user interactions

- error rates

- performance metrics

5. Deployment Flexibility

Key options include:

- cloud deployment

- private cloud

- on-premise infrastructure

6. Vendor Stability

Evaluate:

- support quality

- roadmap transparency

- long-term viability
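The six checklist areas above can be turned into a comparable number per vendor. The sketch below assumes each area is rated from 1 to 5 and combined as a weighted average; the criterion keys, the rating scale, and the default equal weights are assumptions for illustration.

```python
from typing import Optional

# Keys mirror the six checklist areas; the names themselves are hypothetical.
CRITERIA = (
    "data_security",
    "integration",
    "customization",
    "observability",
    "deployment_flexibility",
    "vendor_stability",
)


def vendor_score(ratings: dict, weights: Optional[dict] = None) -> float:
    """Weighted average of 1-5 ratings across all six criteria."""
    weights = weights or {}
    missing = [name for name in CRITERIA if name not in ratings]
    if missing:
        raise ValueError(f"missing ratings: {missing}")
    for name in CRITERIA:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be rated 1-5")

    # Any criterion without an explicit weight counts as 1.0.
    total_weight = sum(weights.get(name, 1.0) for name in CRITERIA)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted = sum(ratings[name] * weights.get(name, 1.0) for name in CRITERIA)
    return weighted / total_weight
```

For example, a team in a regulated industry might pass `weights={"data_security": 2.0}` so that security counts double while the other areas keep equal weight.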

Takeaway:

Vendor evaluation should focus on infrastructure and governance capabilities — not just AI model performance.

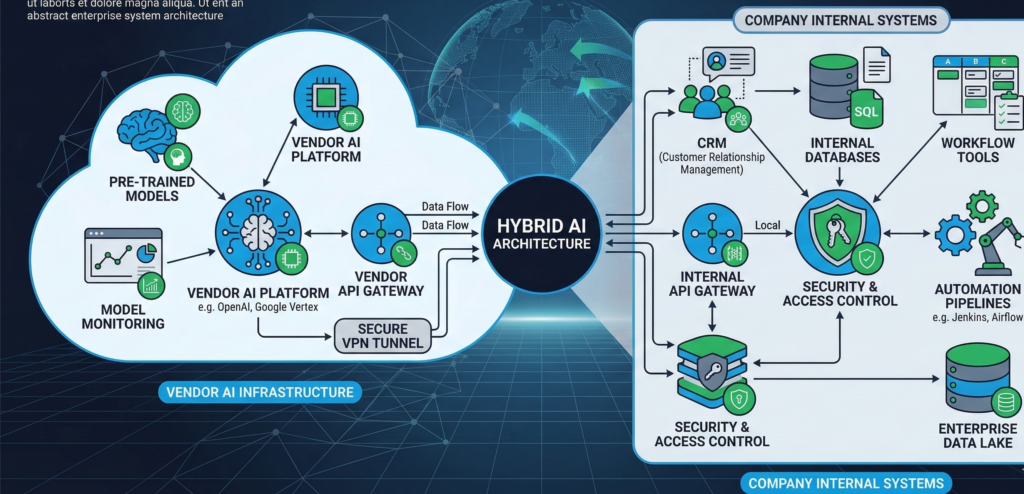

The Hybrid AI Strategy Many Enterprises Now Prefer

The build vs buy debate for AI systems increasingly ends with a third option: hybrid AI deployment.

This strategy combines vendor platforms with internal customization.

How Hybrid AI Deployments Work

Typical structure:

Vendor Platform

Handles:

- infrastructure

- model orchestration

- monitoring

- security controls

Internal Development

Focuses on:

- proprietary workflows

- internal data integrations

- custom logic

- domain-specific automation

This approach allows organizations to avoid rebuilding foundational infrastructure while maintaining flexibility.

Where Hybrid Approaches Work Best

Hybrid models are particularly effective when companies don’t want to operate full AI infrastructure internally but still need:

- custom workflows

- internal knowledge bases

- CRM integrations

- automation pipelines

Takeaway:

Hybrid deployments allow engineering teams to focus on business value rather than infrastructure maintenance.

Decision Matrix: When to Build vs Buy AI Systems

The final decision depends on technical capability, compliance requirements, and long-term strategy.

Use the following decision matrix as a quick guide.

Build AI Internally If:

- AI capability is core to your product

- you have a dedicated ML engineering team

- strict proprietary control is required

- infrastructure investment is acceptable

Buy an AI Platform If:

- AI supports operations rather than product features

- speed of deployment matters

- internal AI expertise is limited

- compliance infrastructure is complex

Use a Hybrid Approach If:

- you need customization

- internal data integration is critical

- infrastructure management should remain external

Quick Scoring Framework

Score each factor from 1–5:

| Factor | Score |
|---|---|
| Internal ML expertise | |
| Compliance complexity | |
| Infrastructure resources | |
| Time-to-market urgency | |
| Customization requirements | |

Higher engineering capacity + lower urgency → build

Higher urgency + lower internal resources → buy
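The two rules above can be written as a small function. This is a sketch under stated assumptions: the factor keys, the thresholds (4 and above counts as high, 2 and below as low), and the fallback to a hybrid recommendation are all illustrative choices, not part of the framework itself.

```python
FACTORS = (
    "ml_expertise",
    "compliance_complexity",
    "infrastructure_resources",
    "time_to_market_urgency",
    "customization_requirements",
)


def recommend(scores: dict) -> str:
    """Map 1-5 factor scores to 'build', 'buy', or 'hybrid'."""
    for name in FACTORS:
        if name not in scores or not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1-5")

    # Engineering capacity: average of expertise and infrastructure resources.
    capacity = (scores["ml_expertise"] + scores["infrastructure_resources"]) / 2
    urgency = scores["time_to_market_urgency"]

    if capacity >= 4 and urgency <= 2:  # assumed threshold for "higher"/"lower"
        return "build"
    if urgency >= 4 and capacity <= 2:
        return "buy"
    # Assumption: anything without a clear signal defaults to hybrid.
    return "hybrid"
```

Compliance complexity and customization requirements are validated but not used by these two rules, so weigh them separately when the result is close.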

Takeaway:

Most operational AI deployments benefit from hybrid or vendor-led approaches.

The Strategic Question Most CTOs Should Ask First

The real question isn’t whether your team can build AI.

Most capable engineering teams can.

The question is whether building AI infrastructure is the highest-value use of your engineering time.

AI systems are evolving rapidly:

- model capabilities change quarterly

- security risks evolve

- infrastructure standards mature quickly

Organizations that treat AI infrastructure as a commodity — while focusing internal engineering on proprietary workflows — often move faster and spend less.

Meanwhile, teams that attempt to build everything themselves frequently spend months solving problems that specialized platforms have already addressed.

The smartest AI strategy isn’t always the most technically ambitious one.

It’s the one that lets your team spend the majority of its time building things only your company can build.

FAQ — Build vs Buy AI Systems

What does build vs buy mean for AI systems?

The build vs buy decision for AI systems refers to whether a company should develop AI capabilities internally or purchase a platform from an external vendor. Building provides maximum control and customization, while buying allows faster deployment and avoids the complexity of managing AI infrastructure.

Is building custom AI cheaper than buying a platform?

Often it is not. While building AI may appear cheaper initially, long-term costs include engineering salaries, infrastructure, monitoring systems, maintenance, and compliance requirements. These operational costs frequently exceed the subscription price of enterprise AI platforms.

When should a company build its own AI system?

Building internally makes sense when AI capabilities are core to the company’s product or competitive advantage. Organizations with dedicated machine learning teams and long-term infrastructure investment plans are best positioned to build proprietary AI systems.

What are the risks of buying AI software from vendors?

Vendor platforms may introduce risks such as vendor lock-in, pricing changes, limited customization, and data governance concerns. Careful vendor evaluation should assess security controls, integration capabilities, and deployment flexibility.

What is a hybrid AI deployment strategy?

Hybrid AI deployments combine vendor infrastructure with internal customization. Companies rely on external platforms for model orchestration, security, and monitoring while building proprietary workflows and integrations internally.

How long does it take to build an enterprise AI system?

Production-grade enterprise AI systems typically require several months to build and deploy. Development includes data pipelines, infrastructure setup, monitoring systems, security controls, and integration with internal tools.