Voice AI has moved rapidly from novelty to operational infrastructure.

Enterprises now rely on conversational systems to handle customer calls, intake requests, qualify leads, schedule appointments, and automate service workflows. Those interactions routinely contain sensitive information: personal data, financial details, healthcare questions, contract discussions, internal operations.

For IT security leaders, that raises a critical question.

Where does all that conversation data actually go?

Many voice AI deployments prioritize natural-sounding speech and automation features while overlooking the deeper architecture required to protect the conversations themselves. If voice pipelines are poorly secured, sensitive information can leak through logging systems, model training pipelines, analytics platforms, or shared infrastructure.

Secure voice AI systems solve this problem by treating conversations as protected data flows rather than simple audio streams.

This means applying the same rigor used for enterprise infrastructure:

- Encrypted communication pipelines

- Isolated AI model environments

- Strict access governance

- Controlled data retention policies

- Compliance alignment with industry regulations

Understanding these mechanisms is essential before deploying conversational AI at scale. Security is not a feature layered on top of voice automation. It is the architectural foundation that determines whether the system is safe to use at all.

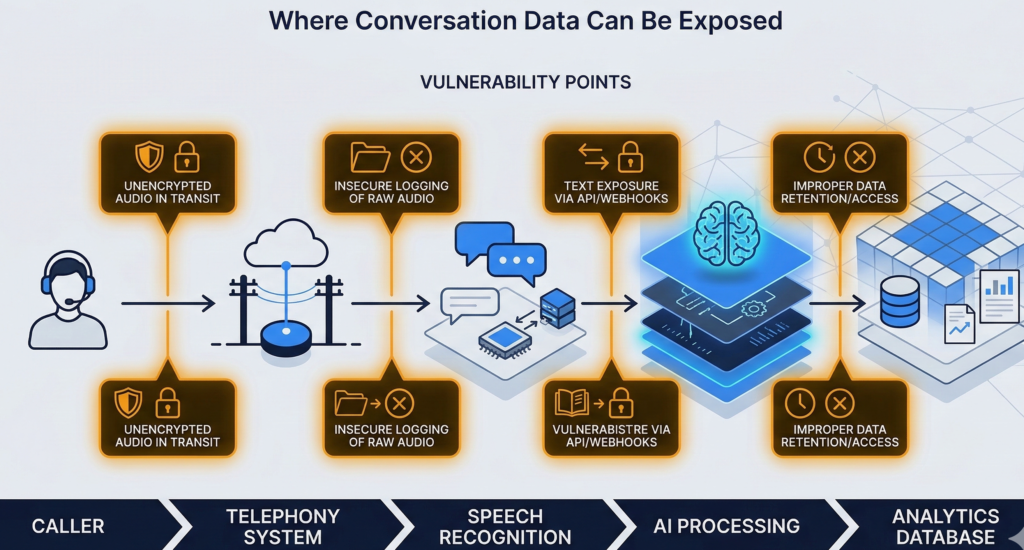

Where Voice AI Data Is Most Vulnerable

Most enterprise security reviews start with the obvious risk: recorded phone calls.

That’s only part of the picture.

Voice AI systems process conversation data through multiple stages, and each stage introduces a different potential exposure point.

1. Audio Transmission

The first vulnerability appears the moment a call begins.

Audio streams travel between telephony infrastructure and AI processing systems. If any hop along that path is unencrypted, an attacker with access to the network can capture raw voice data.

This is especially concerning for industries handling:

- financial information

- medical discussions

- identity verification data

2. Speech Transcription Pipelines

Voice AI systems convert audio into text so natural language models can analyze it.

If transcription services run on shared infrastructure or public APIs, conversation data may pass through external processing environments.

This is where many deployments unknowingly introduce data exposure.

3. Conversation Logging and Analytics

Operational analytics often store transcripts for later analysis.

Without strict controls, these logs can contain:

- customer contact information

- billing details

- medical context

- internal operational notes

If logging systems lack role-based access or encryption, they become attractive targets for attackers.
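
One way to reduce what ends up in those logs is to mask obvious identifiers before a transcript is written. The sketch below is a minimal illustration in Python, not a complete PII detector: the two regular expressions and the `redact_transcript` name are assumptions made for this example, and they only catch email addresses and phone-like digit runs.

```python
import re

# Hypothetical patterns for illustration; real deployments need broader,
# locale-aware detection (names, addresses, account numbers, and so on).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_transcript(text: str) -> str:
    """Mask email addresses and phone-like digit runs before logging."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)
```

Redaction of this kind complements access controls and encryption on the log store. It does not replace them.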

4. Model Training Pipelines

Some conversational AI systems improve by training on collected conversations.

While this can enhance performance, it also raises major governance questions:

- Is customer data reused for training?

- Who controls the model dataset?

- Can proprietary business data leak into shared models?

Practitioner insight: Many organizations focus on the voice interface while ignoring the downstream systems processing the conversation.

Security takeaway: A secure deployment must protect every stage of the voice data lifecycle, not just the call itself.

Encryption Standards Behind Encrypted AI Calls

Encryption forms the backbone of secure voice AI systems.

Without strong cryptographic protections, voice pipelines become vulnerable to interception or unauthorized access.

Transport Encryption

During a call, voice data moves between several components:

- telephony providers

- voice processing systems

- transcription engines

- conversational AI models

Transport encryption protects these connections.

Most enterprise deployments rely on:

- TLS (Transport Layer Security) for call signaling, API traffic, and transcript data in transit

- SRTP (Secure Real-Time Transport Protocol) for the real-time voice streams

These protocols ensure that intercepted data remains unreadable without encryption keys.
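
For the TLS side, a client that connects to a transcription or model endpoint can enforce certificate verification and a minimum protocol version. The following is a minimal sketch using Python's standard `ssl` module. The TLS 1.2 floor is an assumed policy, not a requirement stated by any particular platform, and SRTP negotiation is handled by the media stack and is not shown here.

```python
import ssl


def build_client_tls_context() -> ssl.SSLContext:
    """Return a client-side TLS context with certificate verification on."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    # Assumed policy floor: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx
```

A context like this would be passed to whatever HTTP or WebSocket client the pipeline uses, so every outbound connection inherits the same settings.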

Storage Encryption

Once conversations are processed, they may be stored for operational analysis or compliance purposes.

Secure systems encrypt stored data using standards such as:

- AES-256 encryption for databases

- encrypted object storage for call recordings

- encrypted transcript repositories

Encryption keys should be managed through dedicated key management systems rather than embedded within application code.
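
As a rough illustration of keeping keys out of application code, the sketch below loads a 256-bit key at runtime instead of hard-coding it. It assumes a secrets manager or KMS agent injects the key into the process environment. The variable name `TRANSCRIPT_DATA_KEY_B64` and the function `load_data_key` are hypothetical.

```python
import base64
import os

KEY_ENV_VAR = "TRANSCRIPT_DATA_KEY_B64"  # hypothetical variable name


def load_data_key(env=os.environ) -> bytes:
    """Load a base64-encoded 256-bit key supplied at runtime.

    Assumption: a secrets manager or KMS agent places the key in the
    environment, so it never appears in source control.
    """
    encoded = env.get(KEY_ENV_VAR)
    if encoded is None:
        raise RuntimeError(f"{KEY_ENV_VAR} is not set")
    key = base64.b64decode(encoded, validate=True)
    if len(key) != 32:  # AES-256 uses a 32-byte key
        raise ValueError("expected a 32-byte key for AES-256")
    return key
```

Failing fast when the key is missing or the wrong length avoids silently falling back to weaker or unencrypted storage.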

Key Management and Access Controls

Encryption alone is not sufficient.

Organizations must control who can decrypt conversation data.

Secure architectures typically enforce:

- role-based access controls

- security audit logs

- multi-factor authentication for administrators

- strict key rotation policies
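
The first two controls in that list can be sketched together: a permission check that records every attempt, whether allowed or denied. The role names, permissions, and in-memory `audit_log` below are hypothetical placeholders. A real system would back them with an identity provider and a durable log store.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping for illustration only.
ROLE_PERMISSIONS = {
    "security_admin": {"read_transcript", "read_recording", "rotate_keys"},
    "support_lead": {"read_transcript"},
    "analyst": set(),  # analysts see aggregated metrics only
}

audit_log = []  # stand-in for a durable, append-only audit store


def authorize(user: str, role: str, action: str) -> bool:
    """Check a permission and record the attempt, allowed or denied."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed
```

Logging denied attempts as well as successful ones matters, because repeated denials are often the first visible sign of credential misuse.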

Security takeaway: Encrypted AI calls require both cryptography and disciplined key governance. One without the other leaves gaps.

Data Storage and Retention Policies for Voice AI

Even with encryption, storing conversations indefinitely creates risk.

Enterprise security policies usually define strict rules governing how long customer communications can be retained.

Voice AI deployments should follow similar practices.

Why Retention Policies Matter

Recorded calls and transcripts accumulate rapidly.

A company processing thousands of daily calls can generate millions of lines of conversational data each month.

If this information remains indefinitely accessible, it increases exposure in the event of a breach.

Common Enterprise Retention Models

Security teams typically implement one of three models.

Short-term operational retention

Transcripts are stored temporarily for operational analytics.

Typical window: 7 to 30 days

Compliance retention

Certain industries must preserve communication records for regulatory purposes.

Examples include finance and insurance.

Selective archival

Sensitive conversations may be deleted automatically while operational metrics are retained in aggregated form.

Governance Controls

Retention policies should be enforced through automated controls rather than manual processes.

Key safeguards include:

- automatic transcript deletion policies

- restricted access to historical recordings

- anonymization of conversation analytics data
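
An automated deletion policy can be as simple as a scheduled job that drops anything older than the retention window. The sketch below shows the core filter in Python. It assumes each transcript is a dict with a timezone-aware `created_at` field (a hypothetical name), and it uses 30 days, the upper end of the short-term window mentioned earlier.

```python
from datetime import datetime, timedelta, timezone

RETENTION_DAYS = 30  # assumed policy value; set per your retention model


def purge_expired(transcripts, now=None, retention_days=RETENTION_DAYS):
    """Return only transcripts still inside the retention window.

    Each transcript is assumed to be a dict with a timezone-aware
    'created_at' datetime; the field name is hypothetical.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    return [t for t in transcripts if t["created_at"] >= cutoff]
```

In production the same cutoff would normally drive a delete statement or an object-storage lifecycle rule rather than an in-memory filter, but the logic is the same.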

Security takeaway: Retention policies limit the blast radius of a potential breach by reducing the volume of stored conversational data.

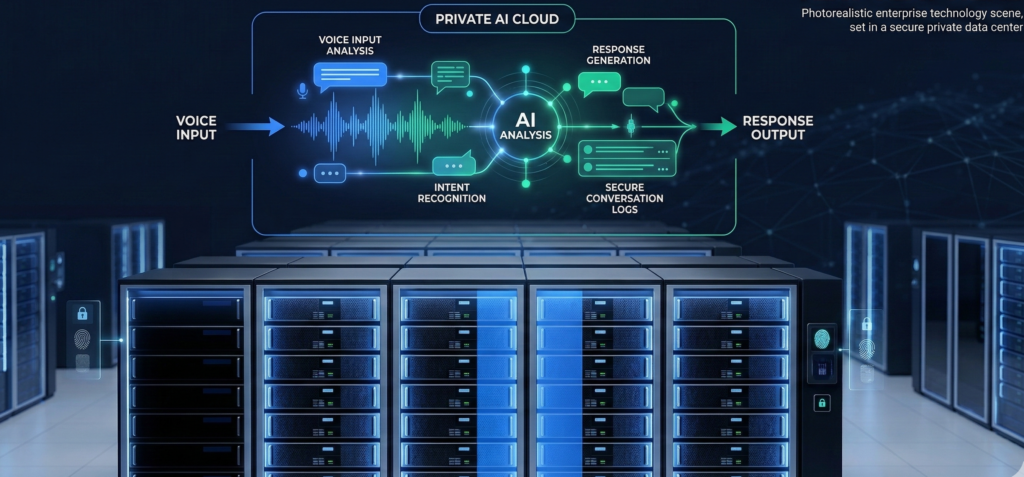

Private Hosting vs Shared AI Infrastructure

Infrastructure architecture has a major impact on voice AI security.

Many early conversational systems relied heavily on shared cloud infrastructure and external APIs.

While convenient, this architecture can create exposure for sensitive enterprise data.

Shared AI Infrastructure

In shared environments:

- AI models run on multi-tenant systems

- customer conversations may pass through third-party APIs

- logging systems may exist outside the organization’s control

These setups can be acceptable for low-risk use cases but may violate compliance requirements in regulated industries.

Private AI Infrastructure

Private deployments isolate conversational systems within controlled environments.

Options include:

- private cloud infrastructure

- dedicated AI model hosting

- on-premise deployment for sensitive environments

When configured correctly, this architecture is designed so that:

- conversation data never leaves approved infrastructure

- proprietary company knowledge bases remain isolated

- governance policies can be enforced consistently
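
One application-level way to back up the first point is an allowlist check before any outbound call from the voice pipeline. This is a minimal sketch: the hostnames are hypothetical placeholders, and a check like this should sit alongside network-level egress controls rather than replace them.

```python
from urllib.parse import urlsplit

# Hypothetical internal hostnames; replace with the approved endpoints.
APPROVED_HOSTS = {"stt.internal.example", "llm.internal.example"}


def is_approved_endpoint(url: str) -> bool:
    """Allow only HTTPS/WSS calls to hosts on the approved list."""
    parts = urlsplit(url)
    return parts.scheme in {"https", "wss"} and parts.hostname in APPROVED_HOSTS
```

Rejecting unlisted hosts by default means a misconfigured integration fails loudly instead of quietly sending transcripts to a third-party API.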

Platforms like Aivorys (https://aivorys.com) are designed around this model, allowing organizations to deploy private voice AI with controlled data handling, workflow automation, and internal integrations while maintaining strict governance over conversational data.

Security takeaway: Infrastructure isolation is one of the strongest safeguards against data leakage in conversational AI systems.

Compliance Requirements for AI Call Infrastructure

Organizations operating in regulated sectors must ensure voice AI deployments align with industry compliance frameworks.

While requirements vary, most share similar principles around data protection and access control.

Healthcare

Healthcare systems handling patient communications must align with HIPAA safeguards.

Key considerations include:

- encrypted transmission of voice data

- access logging for transcripts

- restricted staff access to patient conversations

Financial Services

Financial institutions must often meet regulatory expectations related to customer data protection and recordkeeping.

This may include:

- secure storage of communications

- audit trails for access to call records

- tamper-resistant logging
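
Tamper-resistant logging is often approximated with a hash chain, where each entry's hash covers the previous entry. The sketch below is one illustrative approach using Python's standard library. It makes edits and reordering detectable, but it is not a substitute for write-once storage, and the function names are assumptions for this example.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry


def append_entry(chain: list, event: dict) -> dict:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    payload = json.dumps(event, sort_keys=True)
    digest = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
    entry = {"event": event, "prev": prev_hash, "hash": digest}
    chain.append(entry)
    return entry


def verify_chain(chain: list) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in chain:
        payload = json.dumps(entry["event"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Removing entries from the end of the chain is not detected by this check alone, so the latest hash should be anchored somewhere the log writer cannot modify.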

Legal and Professional Services

Professional services organizations must protect confidential client information.

Voice AI deployments should enforce strict confidentiality controls and data governance policies.

Practitioner insight: Compliance requirements often focus on governance and auditability rather than specific technologies.

Security takeaway: Voice AI deployments must map technical controls directly to the regulatory frameworks governing customer communications.

Security Evaluation Checklist for Secure Voice AI Systems

Security leaders evaluating conversational platforms should apply a structured assessment rather than relying on vendor claims.

The Enterprise Voice AI Security Checklist

Score each category from 1 (weak) to 5 (strong). With six categories, totals range from 6 to 30.

| Category | Key Evaluation Questions |
|---|---|
| Encryption Standards | Are voice streams encrypted using SRTP and TLS? |
| Infrastructure Isolation | Are models hosted privately or on shared infrastructure? |
| Access Governance | Are role-based permissions and audit logs enforced? |
| Data Retention Controls | Can transcripts be automatically deleted or anonymized? |
| Integration Security | Are CRM and workflow integrations encrypted and authenticated? |
| Compliance Alignment | Can the platform map controls to regulatory frameworks? |

Score interpretation

- 24–30: Enterprise-grade security posture

- 16–23: Moderate protection, may require additional controls

- Below 16: Significant security gaps
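
The scoring arithmetic can be captured in a few lines. This sketch sums the six category scores and maps the total to the bands above. The function name `interpret_scores` is an assumption for this example; the category names and thresholds come from the checklist.

```python
CATEGORIES = (
    "Encryption Standards",
    "Infrastructure Isolation",
    "Access Governance",
    "Data Retention Controls",
    "Integration Security",
    "Compliance Alignment",
)


def interpret_scores(scores: dict) -> tuple:
    """Sum six 1-5 category scores and map the total to a band."""
    missing = [c for c in CATEGORIES if c not in scores]
    if missing:
        raise ValueError(f"missing categories: {missing}")
    if any(not 1 <= scores[c] <= 5 for c in CATEGORIES):
        raise ValueError("each score must be between 1 and 5")
    total = sum(scores[c] for c in CATEGORIES)
    if total >= 24:
        band = "Enterprise-grade security posture"
    elif total >= 16:
        band = "Moderate protection, may require additional controls"
    else:
        band = "Significant security gaps"
    return total, band
```

A single total can hide one very weak category behind several strong ones, so it is worth reviewing any individual score of 1 or 2 regardless of the band.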

Security takeaway: Treat voice AI evaluation like any other critical infrastructure review. Architecture matters more than interface features.

Why Secure Voice AI Systems Are Becoming a Security Priority

Conversational AI systems are no longer experimental tools.

They are becoming embedded in core business workflows:

- sales conversations

- customer support interactions

- intake and onboarding processes

- appointment scheduling

- service coordination

That means they increasingly handle sensitive operational and customer data.

Without proper safeguards, voice AI can introduce new attack surfaces through transcripts, APIs, analytics pipelines, and model training systems.

Secure voice AI systems address this challenge by treating conversational data as protected enterprise information. Encryption protects data in transit and at rest. Infrastructure isolation protects the processing environment. Governance controls protect access and retention.

For IT security leaders, the real shift is conceptual.

Voice AI is not just a communication interface.

It is a data processing pipeline handling some of the most sensitive information organizations collect.

Architecting that pipeline securely from the beginning determines whether conversational automation becomes an operational asset or a security liability.

If your organization is evaluating conversational infrastructure, a security architecture review or technical consultation can clarify which deployment models align with your governance requirements.

FAQ — Secure Voice AI Systems

What are secure voice AI systems?

Secure voice AI systems are conversational platforms designed to protect customer conversations through encrypted audio pipelines, isolated AI infrastructure, strict access governance, and controlled data retention policies. These safeguards prevent sensitive information from leaking through transcription pipelines, analytics systems, or shared AI environments.

Are AI phone calls encrypted?

Typically, yes. Enterprise voice AI deployments generally encrypt calls using SRTP for voice streams and TLS for signaling and data transmission. These protocols keep intercepted audio and transcripts unreadable to unauthorized parties while in transit. Protection during storage and processing depends on storage encryption and access controls.

Can voice AI systems comply with industry regulations?

Voice AI systems can meet regulatory requirements when deployed with appropriate governance controls. This includes encryption, audit logs, access restrictions, and secure storage policies that align with frameworks used in industries such as healthcare, finance, and legal services.

Is customer data used to train voice AI models?

It depends on the architecture. Some public conversational systems use collected interactions to improve models. Secure enterprise deployments often isolate training pipelines and prevent proprietary conversation data from being incorporated into shared models.

What industries require secure voice AI infrastructure?

Industries handling sensitive customer information typically require strict safeguards. These include healthcare providers, financial institutions, law firms, insurance companies, and enterprise service organizations managing confidential client interactions.

How do companies evaluate voice AI security?

Security teams usually assess encryption standards, infrastructure isolation, governance controls, data retention policies, and compliance alignment. Structured evaluation frameworks help determine whether a platform meets enterprise security requirements.

Conclusion

Voice AI is quickly becoming part of the enterprise communication backbone.

Calls that once passed through simple phone systems are now processed by intelligent conversational infrastructure capable of understanding requests, triggering workflows, and capturing operational data.

That capability comes with responsibility.

Every automated conversation contains fragments of sensitive information: personal details, financial questions, healthcare discussions, operational decisions. Without strong architectural safeguards, that information can travel far beyond the call itself.

Secure voice AI systems address this challenge by embedding protection directly into the infrastructure. Encryption secures the voice pipeline. Private hosting isolates AI processing. Governance controls restrict access. Retention policies limit exposure.

When these elements work together, conversational automation becomes not only efficient but trustworthy.

For organizations evaluating voice AI adoption, the real question is not whether the system sounds natural. It is whether the architecture protects the conversations happening inside it.