An AI system denies a loan application incorrectly.

A chatbot provides inaccurate medical intake advice.

An automated sales assistant sends misleading information to a customer.

None of these failures require malicious intent. They can emerge from training data bias, automation logic errors, prompt manipulation, or system integration issues.

The real problem is what happens next.

Who is responsible when AI makes a mistake?

The vendor that built the system?

The company that deployed it?

The employee who relied on the output?

This question sits at the center of a rapidly evolving legal issue: AI liability for businesses.

As organizations deploy AI across customer service, sales, operations, and decision support, legal frameworks are still catching up. Regulators are issuing guidance, but standards remain uneven across jurisdictions.

That uncertainty creates a new category of operational risk.

AI can automate decisions at scale — but without governance structures, accountability becomes unclear when those decisions cause harm.

For CEOs and legal leaders evaluating AI adoption, the real challenge is not whether AI can create value. It’s whether the organization can deploy it responsibly enough to withstand regulatory scrutiny and legal disputes.

This article examines how AI liability works in practice, how responsibility is typically distributed across vendors and enterprises, and what governance frameworks businesses need to reduce legal exposure.

What Is AI Liability for Businesses?

AI liability for businesses refers to the legal responsibility organizations may face when an artificial intelligence system causes harm, makes incorrect decisions, or violates regulations during business operations.

Liability may arise in several contexts:

- Financial decisions (loans, pricing, credit approval)

- Healthcare intake or triage automation

- Customer service responses

- Hiring and recruitment decisions

- Contract interpretation

- Marketing claims generated by AI

When an AI system produces an outcome that leads to harm, regulators and courts typically evaluate three questions:

- Was the system used responsibly?

- Were risks reasonably foreseeable?

- Did the organization implement appropriate safeguards?

AI does not eliminate accountability.

In most legal interpretations, organizations remain responsible for the tools they deploy, even when those tools rely on external vendors or automated models.

Why AI Liability Is Increasingly Relevant

Three trends are accelerating legal scrutiny:

1. Automation of business decisions

AI increasingly participates in operational choices previously made by humans.

2. Scale of impact

Automated systems can affect thousands of customers simultaneously.

3. Regulatory momentum

Governments worldwide are developing frameworks addressing AI accountability and transparency.

Micro-insight:

AI mistakes are rarely technical failures alone — they become legal issues when governance is absent.

Actionable takeaway:

Before deploying AI in customer-facing or decision-support roles, organizations must define who is accountable for monitoring outputs and intervening when errors occur.

AI Decision Accountability: Who Owns the Outcome?

A common misconception is that AI decisions are autonomous.

In reality, AI systems operate within organizational control structures.

That means responsibility ultimately maps back to the organization deploying the technology.

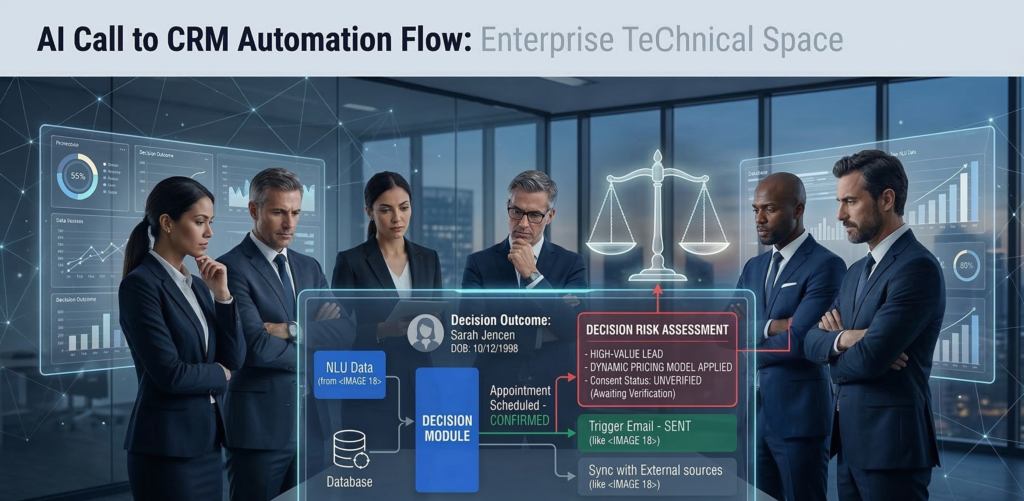

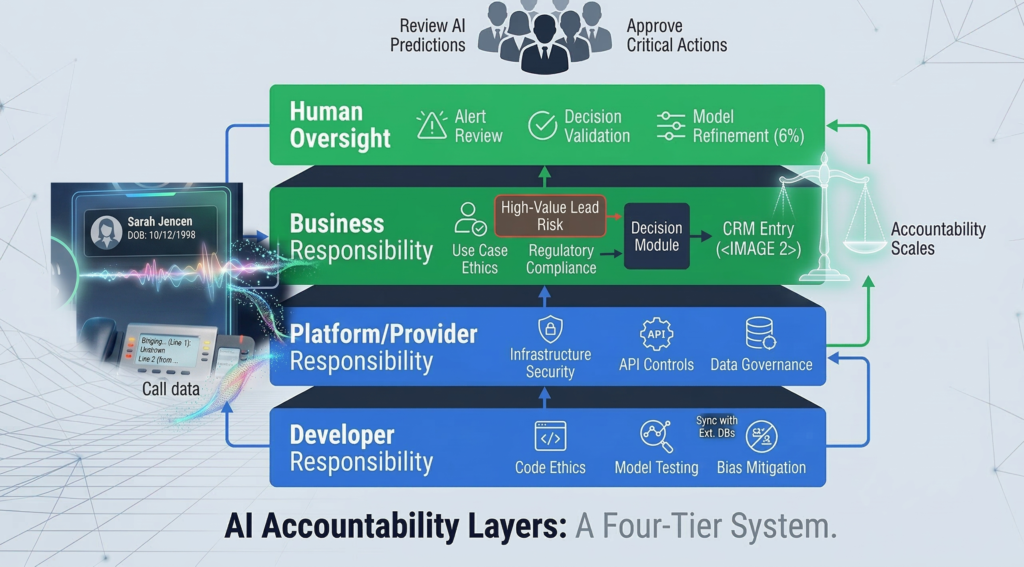

The Four Layers of AI Accountability

Understanding liability requires examining four operational layers.

1. Model Developer

The organization that builds the underlying AI model.

Responsibilities may include:

- training data integrity

- model performance standards

- documentation of limitations

However, developers commonly disclaim liability for how the model is used downstream.

2. AI Platform Provider

The company offering the infrastructure or system integrating the model.

Responsibilities may include:

- system security

- operational reliability

- audit logging

- configuration controls

3. Deploying Organization

The business implementing the AI system within its workflows.

Responsibilities typically include:

- defining use cases

- setting guardrails

- monitoring outputs

- ensuring regulatory compliance

In practice, this layer typically carries most of the legal accountability.

4. Human Oversight

Employees responsible for reviewing or acting on AI outputs.

Courts and regulators increasingly expect human oversight in high-risk AI applications.

Micro-insight:

AI does not replace responsibility — it redistributes it across system layers.

Actionable takeaway:

Every AI deployment should include a documented accountability chain identifying who owns monitoring, escalation, and corrective action.

Vendor vs. Enterprise Liability: Where Responsibility Actually Falls

When AI errors occur, organizations often assume the vendor bears the risk.

In practice, liability usually shifts toward the deploying business.

Why Vendors Limit Legal Responsibility

Most AI platform agreements include clauses that:

- limit vendor liability

- restrict damages

- assign responsibility for configuration and usage

This reflects a common contractual rationale:

Vendors provide tools — businesses control how those tools are used.

Example Scenario

Consider a sales automation AI that sends inaccurate pricing information.

Possible liability paths:

| Scenario | Responsible Party |
|---|---|
| Vendor algorithm malfunction | Vendor partially responsible |
| Business configured incorrect pricing rules | Business responsible |
| AI misinterprets prompt instructions | Business responsible |
| Employee ignores warning signals | Business responsible |

Most disputes ultimately hinge on whether the deploying company implemented reasonable safeguards.

What Courts Typically Evaluate

Legal analysis usually focuses on:

- documentation of intended use

- safeguards against misuse

- human review processes

- monitoring mechanisms

Micro-insight:

The more autonomy you give AI systems, the more governance you must demonstrate.

Actionable takeaway:

Legal teams should review AI vendor contracts carefully to understand liability limits and operational responsibilities.

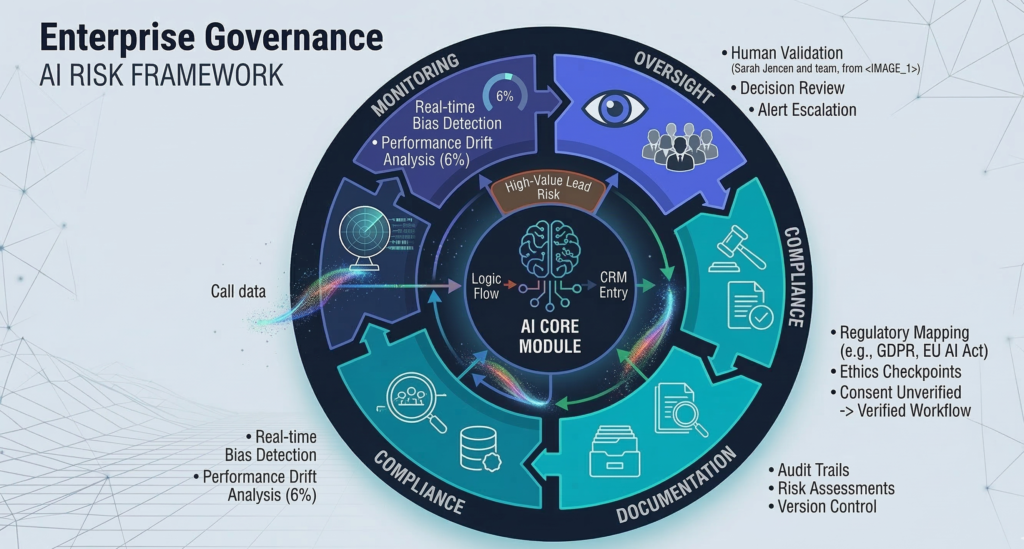

Emerging Regulatory Guidance for Enterprise AI

Regulatory bodies around the world are actively shaping AI governance frameworks.

While laws differ by jurisdiction, several common principles are emerging.

Core Regulatory Themes

Across regulatory guidance and policy discussions, several expectations consistently appear.

Transparency

Organizations should disclose when AI participates in decision-making processes.

Accountability

Businesses must assign responsibility for AI outcomes.

Risk classification

High-impact AI use cases require stronger oversight.

Examples include:

- healthcare recommendations

- financial approvals

- employment decisions

Auditability

Organizations must maintain documentation showing how AI decisions are generated.

Risk-Based Regulation

Many regulatory proposals use a risk-tier model.

| Risk Level | Example AI Use Case | Oversight Requirement |
|---|---|---|
| Low | Internal productivity tools | Minimal |
| Medium | Marketing automation | Moderate |
| High | Credit scoring or hiring | Strict |

Industry consensus:

Organizations deploying high-risk AI must demonstrate active governance and oversight.

Actionable takeaway:

Classify AI deployments by risk level before implementation. High-impact use cases require formal governance processes.
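
For teams that track deployments in code, the risk-tier table can be expressed as a small lookup. The sketch below is illustrative only: the `classify_use_case` function and its two yes/no inputs are hypothetical simplifications, not a regulatory test, and a real classification would follow the rules of the relevant jurisdiction.

```python
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Oversight level per tier, mirroring the risk-tier table in this section.
OVERSIGHT = {
    RiskTier.LOW: "minimal",
    RiskTier.MEDIUM: "moderate",
    RiskTier.HIGH: "strict",
}


def classify_use_case(affects_finances_health_or_employment: bool,
                      customer_facing: bool) -> RiskTier:
    """Hypothetical rule of thumb: high-impact decisions always rank highest."""
    if affects_finances_health_or_employment:
        return RiskTier.HIGH
    if customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Example: a credit-scoring model is high risk and needs strict oversight.
tier = classify_use_case(affects_finances_health_or_employment=True,
                         customer_facing=True)
print(tier.value, OVERSIGHT[tier])
```

Checking the high-impact condition first means a system can never be downgraded simply because it is internal.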

AI Documentation Requirements: The Evidence of Responsible Deployment

When disputes occur, documentation often determines legal outcomes.

Organizations must prove they implemented AI responsibly.

Critical Documentation Categories

Legal and compliance teams should maintain records across five areas.

1. Use Case Definition

Clear description of:

- business purpose

- decision scope

- affected stakeholders

2. Risk Assessment

Documentation evaluating potential harms such as:

- biased outcomes

- incorrect decisions

- regulatory violations

3. Model Limitations

Understanding where the AI system may fail.

4. Monitoring Procedures

Policies describing:

- performance monitoring

- escalation triggers

- review processes

5. Incident Response Plan

Defined procedures for handling AI errors or unexpected behavior.

Platforms like Aivorys (https://aivorys.com) address part of this challenge by supporting controlled deployments, audit logging, and workflow guardrails around enterprise AI systems — capabilities that become increasingly important as governance expectations grow.

Micro-insight:

Good documentation doesn’t prevent AI mistakes — it proves you managed risk responsibly.

Actionable takeaway:

Create an internal AI deployment record for every system used in customer-facing or decision-making roles.
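
One possible shape for such a record is sketched below. The `DeploymentRecord` class and its field names are assumptions chosen to match the five documentation categories in this section; they are not a standard schema.

```python
from dataclasses import dataclass, fields


@dataclass
class DeploymentRecord:
    """Hypothetical record with one free-text field per documentation category."""
    system_name: str
    use_case_definition: str = ""
    risk_assessment: str = ""
    model_limitations: str = ""
    monitoring_procedures: str = ""
    incident_response_plan: str = ""

    def missing_sections(self) -> list[str]:
        # A section counts as missing when it is empty or whitespace only.
        return [f.name for f in fields(self)
                if not getattr(self, f.name).strip()]


record = DeploymentRecord(
    system_name="sales-assistant",
    use_case_definition="Answer pricing questions from existing customers",
)
print(record.missing_sections())
```

A completeness check like `missing_sections` could gate deployment approval, so that a system does not go live while any category is still blank.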

Risk Mitigation Framework for AI Liability

Organizations can significantly reduce exposure through structured governance.

A practical risk mitigation framework includes five pillars.

1. Use Case Risk Classification

Evaluate the potential impact of the AI system.

Questions to ask:

- Does the system influence financial decisions?

- Could incorrect outputs harm customers?

- Is the decision legally sensitive?

2. Human Oversight Design

Determine when humans intervene.

Examples:

- approval thresholds

- exception handling

- manual review requirements
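
As a rough illustration, the three oversight mechanisms above can be combined into a single routing rule. Everything in this sketch is assumed for the example: the `Decision` fields, the 10,000 amount limit, and the 0.9 confidence floor are placeholders that each organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    amount: float              # monetary value affected by the decision
    confidence: float          # model-reported confidence, 0.0 to 1.0
    is_exception: bool = False  # flagged as outside the normal workflow


def route(decision: Decision,
          amount_limit: float = 10_000.0,
          min_confidence: float = 0.9) -> str:
    """Return who acts on the decision under hypothetical thresholds."""
    if decision.is_exception:
        return "manual_review"      # exception handling
    if decision.amount > amount_limit or decision.confidence < min_confidence:
        return "human_approval"     # approval threshold exceeded
    return "auto_approve"


print(route(Decision(amount=500.0, confidence=0.97)))
print(route(Decision(amount=50_000.0, confidence=0.97)))
```

Keeping the thresholds as explicit parameters makes them easy to document and to change after a governance review.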

3. Prompt and Behavior Controls

Define guardrails for AI responses.

These may include:

- response templates

- escalation triggers

- restricted topics
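
A deliberately simple sketch of these guardrails follows. The topic and trigger lists are invented examples, and plain keyword matching is used only to show the control flow; production systems would likely need something more robust.

```python
# Hypothetical policy lists; each organization would define its own.
RESTRICTED_TOPICS = {"diagnosis", "legal advice"}
ESCALATION_TRIGGERS = {"complaint", "refund", "lawsuit"}


def check_message(text: str) -> str:
    """Decide how the assistant should handle an incoming message."""
    lowered = text.lower()
    if any(topic in lowered for topic in RESTRICTED_TOPICS):
        return "refuse_with_template"   # answer only with an approved template
    if any(trigger in lowered for trigger in ESCALATION_TRIGGERS):
        return "escalate_to_human"
    return "allow"


print(check_message("Can you give me a diagnosis?"))
print(check_message("I want a refund"))
print(check_message("What are your opening hours?"))
```

Restricted topics are checked before escalation triggers so that a message touching both is still kept away from free-form AI responses.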

4. Monitoring and Logging

Track system activity including:

- prompts

- responses

- system actions

Audit logs become critical during investigations.
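
A minimal sketch of such a log, assuming one JSON object per line in an append-only file, is shown below. The function names, the field names, and the file path are all hypothetical; a real deployment would also need access controls and retention rules.

```python
import json
from datetime import datetime, timezone


def audit_entry(system: str, prompt: str, response: str, action: str) -> str:
    """Build one log line capturing the prompt, response, and system action."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system": system,
        "prompt": prompt,
        "response": response,
        "action": action,
    }
    return json.dumps(entry, sort_keys=True)


def append_audit_log(path: str, line: str) -> None:
    # Append mode keeps earlier entries intact.
    with open(path, "a", encoding="utf-8") as f:
        f.write(line + "\n")


line = audit_entry("sales-assistant", "What does plan B cost?",
                   "Plan B costs $10 per month.", "sent_email")
print(line)
```

Recording the timestamp in UTC avoids ambiguity when an investigation spans several offices or time zones.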

5. Continuous Review

Governance is not static.

Organizations should conduct periodic reviews to assess:

- performance drift

- compliance changes

- operational risks

Micro-insight:

Responsible AI deployment is an operational discipline, not a technical feature.

Actionable takeaway:

Treat AI governance as a continuous program rather than a one-time compliance exercise.

The Governance Committee Model for Enterprise AI

As AI adoption expands, many organizations are establishing internal governance committees.

These groups ensure AI decisions align with legal, operational, and ethical standards.

Typical AI Governance Committee Structure

Members often include representatives from:

- legal

- IT / security

- operations

- data science

- executive leadership

Each role provides a different perspective on risk.

Responsibilities of the Committee

Common responsibilities include:

- AI use case approvals: evaluating proposed AI deployments.

- Policy development: defining acceptable use guidelines.

- Risk review: assessing high-impact automation systems.

- Incident oversight: investigating failures or unexpected outcomes.

Why This Model Works

AI risks rarely fall within a single department.

Legal teams may understand compliance requirements, but operational leaders understand workflow realities.

A governance committee bridges that gap.

Micro-insight:

Organizations that scale AI responsibly treat governance as a cross-functional capability.

Actionable takeaway:

Even smaller companies deploying AI in sensitive workflows should designate cross-department oversight.


FAQ — AI Liability for Businesses

Who is legally responsible when AI makes a mistake?

In most cases, the organization deploying the AI system is responsible for its outcomes. While vendors may share liability in cases of product defects or system failures, businesses typically remain accountable for how AI tools are configured, monitored, and used within their operations.

Can AI systems be held legally liable?

No. AI systems themselves cannot be held legally liable because they are not legal persons. Responsibility falls on the organizations or individuals who develop, deploy, or oversee the system. Current legal frameworks treat AI as a tool rather than an independent actor.

What types of AI decisions create the highest legal risk?

AI systems that influence financial approvals, healthcare guidance, employment decisions, and legal interpretations generally carry the highest risk. These decisions directly affect people’s rights, finances, or well-being, which increases regulatory scrutiny and potential liability exposure.

Do businesses need formal AI governance policies?

Yes. As AI becomes embedded in operational workflows, many organizations are adopting formal governance policies. These policies define acceptable use, oversight responsibilities, monitoring procedures, and documentation requirements to ensure AI systems operate within regulatory and ethical boundaries.

How can companies reduce AI liability risk?

Businesses can reduce risk by implementing governance frameworks, maintaining detailed documentation, monitoring AI performance, and ensuring human oversight for sensitive decisions. Legal teams should also review vendor contracts to understand liability boundaries and compliance obligations.

Are governments regulating AI for business use?

Yes. Governments and regulatory bodies are actively developing policies addressing AI transparency, accountability, and risk management. Many proposals use risk-based frameworks where high-impact AI applications must meet stricter oversight and documentation standards.

Conclusion

AI introduces a new category of operational power — and a new category of responsibility.

The technology can automate analysis, decisions, and customer interactions at a scale no human team could match. But automation does not eliminate accountability. It concentrates it.

Organizations deploying AI must be able to answer three questions clearly:

Who monitors the system?

Who intervenes when it fails?

Who owns the consequences?

Those answers rarely come from technology alone. They come from governance structures, documented processes, and clear oversight models.

Businesses that treat AI as infrastructure — not just software — will be far better positioned to navigate regulatory uncertainty and legal scrutiny.

If your organization is beginning to deploy AI across customer interactions, operational workflows, or decision-making systems, establishing governance early is far easier than rebuilding it later.

For leadership teams exploring how to structure that oversight, an internal AI governance workshop can be a practical starting point for aligning legal, technical, and operational responsibilities before risk emerges.