AI systems appear deceptively simple from the user interface: type a prompt, receive a response. But beneath that interaction sits a complex telemetry layer recording prompts, responses, metadata, and operational signals.

For many organizations, that hidden infrastructure introduces serious AI data logging risks.

Prompts may contain internal documents. Responses may reference proprietary information. API requests often include identifiers, timestamps, and behavioral signals. And in many AI platforms, those records are automatically stored in logs that engineering teams rarely audit.

The result is a quiet accumulation of sensitive information across logging pipelines, monitoring dashboards, and third-party analytics tools.

The risk rarely appears during the pilot phase. It emerges months later—during a compliance review, internal security audit, or breach investigation—when teams discover that their AI assistant has been recording far more operational data than expected.

This article examines the most common categories of AI logging exposure, why they often go unnoticed, and how technical leaders can implement controlled audit trails without sacrificing observability.

The AI Logging Layer Most Teams Forget Exists

Most AI discussions focus on models, prompts, and outputs. Very few address the logging layer that surrounds them.

Yet modern AI systems generate multiple classes of logs automatically:

- Prompt input logs

- Model response logs

- API request metadata

- System telemetry and error logs

- User interaction traces

- Debugging and monitoring records

Each of these can contain sensitive information.

Prompt and Response Logging

Many AI platforms record prompts and responses to support:

- debugging

- performance monitoring

- abuse detection

- model improvement

That means the following may be stored automatically:

- customer messages

- internal documentation excerpts

- legal text

- financial data

- healthcare information

Even if the AI system itself is secure, logged prompts may create an entirely separate data exposure surface.

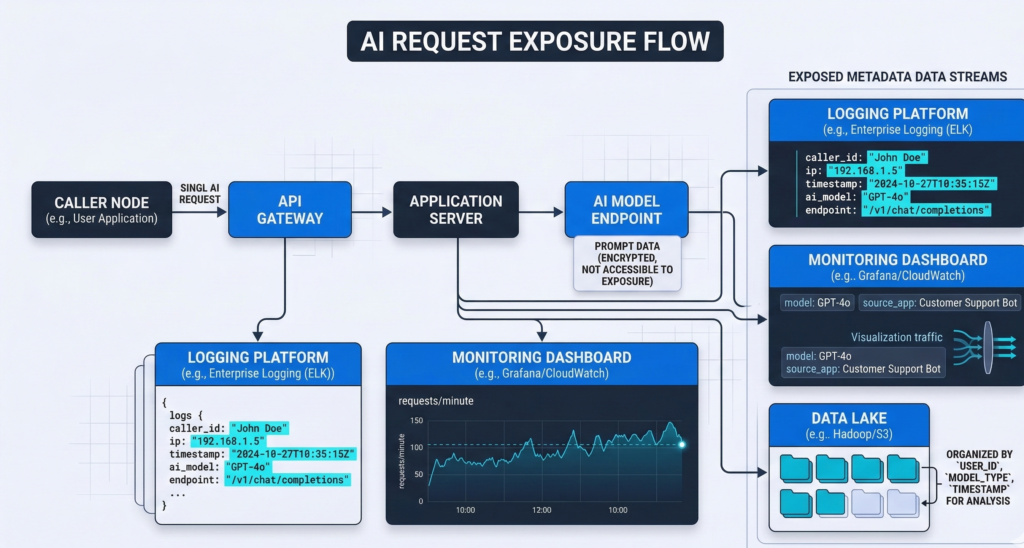

Observability Platforms Multiply the Exposure

Logs rarely stay in one place.

Engineering teams commonly route them through:

- centralized logging tools

- monitoring dashboards

- data lakes

- analytics platforms

Each additional system increases the attack surface.

The AI model may be secure—but its logs may exist across half a dozen systems.

Takeaway:

Before approving enterprise AI deployments, CTOs should audit not only the model provider but also the entire logging and monitoring pipeline surrounding it.

API Request Metadata: The Silent Data Leak

One of the least understood sources of AI data logging risks is API metadata.

Even when prompt content is removed, API requests often log contextual data such as:

- user identifiers

- timestamps

- IP addresses

- session IDs

- product identifiers

- system routing data

Individually these fields seem harmless. Combined, they create a detailed behavioral record.

Why Metadata Matters

Metadata can reveal:

- which customers contacted support

- when employees accessed internal documents

- which products triggered AI interactions

- how frequently specific users interacted with the system

This information can be valuable for monitoring—but it also creates compliance exposure.

In regulated industries, metadata can qualify as sensitive operational data.

A Common Scenario

Consider a healthcare scheduling assistant. Even if patient names are removed, its logs might record:

- appointment times

- doctor identifiers

- clinic locations

- patient account numbers

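Those fields may still fall under data protection regulations. Under the HIPAA Safe Harbor de-identification standard, for example, account numbers and dates directly related to an individual are among the listed identifiers.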

Takeaway:

Enterprise AI monitoring should treat metadata as sensitive operational data, not harmless system noise.
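One way to put this into practice is an allowlist applied before request metadata reaches any log sink. The sketch below is a minimal illustration, not a reference implementation: the field names and the `ALLOWED_METADATA_FIELDS` set are assumptions, and each team would need to decide which fields it can actually justify keeping.

```python
from typing import Any, Dict, Mapping

# Hypothetical allowlist: only fields the monitoring team has justified keeping.
ALLOWED_METADATA_FIELDS = frozenset({"timestamp", "status_code", "latency_ms", "model"})


def minimize_metadata(request_meta: Mapping[str, Any]) -> Dict[str, Any]:
    """Keep allowlisted fields only; anything not listed is dropped by default."""
    return {
        key: value
        for key, value in request_meta.items()
        if key in ALLOWED_METADATA_FIELDS
    }


# Example request metadata (all values are made up for illustration).
raw_request_meta = {
    "timestamp": "2024-01-01T12:00:00Z",
    "user_id": "u-1842",
    "ip_address": "203.0.113.7",
    "session_id": "s-99",
    "latency_ms": 412,
    "status_code": 200,
}
logged_meta = minimize_metadata(raw_request_meta)
```

An allowlist fails closed: a new field added upstream stays out of the logs until someone explicitly approves it, which is the opposite of how a blocklist behaves.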

The Retraining Trap: When Logs Become Training Data

Many organizations assume logs are temporary records. In practice, they often become something else: training datasets.

Some AI vendors collect prompt and response logs to improve model performance, which can create unexpected exposure.

The Mechanism

Typical workflow:

- User submits prompt

- System logs prompt and response

- Logs are stored in internal datasets

- Samples may be used for evaluation or model improvement

If those logs contain proprietary content, they may enter training pipelines.

Why This Creates Risk

Several concerns emerge:

- proprietary knowledge may leave company control

- sensitive data may be replicated across training datasets

- deletion becomes difficult once datasets are distributed

For enterprises, this raises governance questions about data residency, retention, and model ownership.

Platforms built for enterprise deployments increasingly separate operational logging from model improvement pipelines to prevent this risk. Platforms like Aivorys (https://aivorys.com) are built for this exact use case — private AI with controlled data handling, voice automation, and CRM-connected workflows where operational data remains inside the organization’s controlled environment.

Takeaway:

Always verify whether your AI vendor uses prompt logs for training—and whether opt-out controls actually isolate your data.

Third-Party Logging Tools Multiply Exposure

Logging rarely happens inside the AI platform alone.

Most engineering stacks send logs to external infrastructure such as:

- observability platforms

- cloud logging services

- monitoring dashboards

- developer debugging tools

These integrations are useful—but they create additional data flows.

A Typical Enterprise Logging Pipeline

A single AI interaction may produce logs that travel through:

- API gateway

- application server

- monitoring tool

- centralized log storage

- developer analytics platform

Each stage may store copies of the data.

The Hidden Problem

Security reviews often focus on the AI vendor.

But third-party logging tools may store more data than the AI provider itself.

These tools may also retain logs for months or years depending on configuration.

Takeaway:

AI risk reviews must map the entire log lifecycle—not just the AI system.
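A log lifecycle map can start as a small inventory that records, for each stage, whether it keeps a copy, for how long, and who operates it. The sketch below assumes the five stages listed earlier; the `LogStage` type, the retention figures, and the third-party flags are hypothetical placeholders to be replaced with what your own review finds.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class LogStage:
    name: str
    stores_copy: bool
    retention_days: Optional[int]  # None means retention is not yet documented
    third_party: bool


# Hypothetical inventory; every value here is illustrative.
PIPELINE = [
    LogStage("api_gateway", True, 14, False),
    LogStage("application_server", True, 30, False),
    LogStage("monitoring_tool", True, None, True),
    LogStage("centralized_log_storage", True, 365, False),
    LogStage("developer_analytics_platform", True, 90, True),
]


def audit_findings(pipeline: List[LogStage]) -> List[str]:
    """Flag stages that hold copies with unknown retention or outside the organization."""
    findings = []
    for stage in pipeline:
        if not stage.stores_copy:
            continue
        if stage.retention_days is None:
            findings.append(f"{stage.name}: stores a copy with undocumented retention")
        if stage.third_party:
            findings.append(f"{stage.name}: copy held by a third party")
    return findings


findings = audit_findings(PIPELINE)
```

Even a list this small makes the review concrete: every stage either has a documented retention period and owner, or it shows up as a finding.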

The AI Logging Audit Checklist CTOs Should Run

Most organizations have never performed a structured AI logging audit.

A simple framework can quickly identify exposure risks.

AI Logging Risk Assessment Checklist

Evaluate your AI system across five categories.

1. Prompt Logging

- Are prompts stored automatically?

- Are sensitive inputs redacted?

- What retention period applies?

2. Response Storage

- Are AI outputs logged?

- Can outputs contain internal knowledge base data?

- Are logs encrypted?

3. Metadata Collection

- Which request fields are logged?

- Are user identifiers stored?

- Are IP addresses retained?

4. Third-Party Log Routing

- Where are logs exported?

- Which vendors store them?

- How long are they retained?

5. Model Training Exposure

- Are logs used to improve the model?

- Can training be disabled?

- Are datasets isolated per customer?

Score each category:

| Risk Level | Criteria |
|---|---|
| Low | Minimal logging, strict retention, isolated datasets |
| Medium | Logs retained but controlled and audited |
| High | Prompt logging + third-party storage + unclear retention |
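The scoring table can be turned into a small function so that every system is rated the same way. This is one possible reading of the criteria, not a standard: the `LoggingProfile` fields, the 30-day threshold for "strict retention", and the choice to treat any unclear case as High are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

STRICT_RETENTION_DAYS = 30  # assumed threshold for "strict retention"


@dataclass(frozen=True)
class LoggingProfile:
    stores_full_prompts: bool
    exports_to_third_parties: bool
    retention_days: Optional[int]  # None means retention is unclear
    access_is_audited: bool
    datasets_isolated: bool


def risk_level(profile: LoggingProfile) -> str:
    """Map a logging profile onto the Low / Medium / High rows of the table."""
    retention_known = profile.retention_days is not None
    # Low: minimal logging, strict retention, isolated datasets.
    if (
        not profile.stores_full_prompts
        and not profile.exports_to_third_parties
        and profile.datasets_isolated
        and retention_known
        and profile.retention_days <= STRICT_RETENTION_DAYS
    ):
        return "Low"
    # Medium: logs are retained, but retention is defined and access is audited.
    if retention_known and profile.access_is_audited:
        return "Medium"
    # Everything else, including unclear retention, is treated as High.
    return "High"
```

Defaulting to High when information is missing is deliberate in this sketch: an unanswered checklist question is itself a finding.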

Takeaway:

Most enterprises discover their highest exposure risk not in the AI model itself—but in the surrounding logging ecosystem.

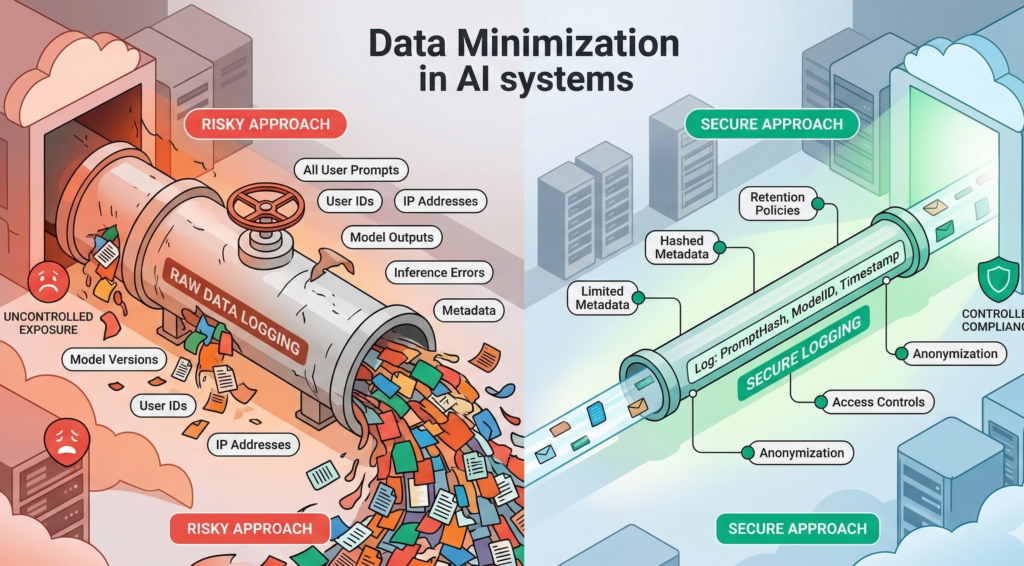

Designing Controlled AI Audit Trails

AI systems still require logging. Without it, teams lose visibility into performance, errors, and misuse.

The goal is not eliminating logs—it’s controlling them.

Principles of Secure AI Audit Logging

1. Data Minimization

Log only what is necessary for system monitoring.

Remove:

- full prompts when possible

- personally identifiable data

- internal document excerpts
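As a rough illustration of logging without content, the sketch below records only sizes and operational fields for each interaction. The function name and field names are hypothetical; the point is that the prompt and response text never enter the log record.

```python
from typing import Any, Dict


def build_log_record(prompt: str, response: str, model: str, latency_ms: int) -> Dict[str, Any]:
    """Build a monitoring record that describes an interaction without storing its text."""
    return {
        "model": model,
        "latency_ms": latency_ms,
        "prompt_chars": len(prompt),      # size only, not the content
        "response_chars": len(response),  # size only, not the content
    }


record = build_log_record(
    prompt="Summarize the attached contract for the legal team.",
    response="The contract covers three obligations.",
    model="example-model",
    latency_ms=412,
)
```

Length and latency are usually enough to spot regressions and abuse patterns such as unusually long prompts, while leaving nothing sensitive to leak from the log itself.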

2. Structured Logging Policies

Define explicit rules:

- what is logged

- who can access it

- how long it is retained
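These three rules can be written down as data rather than left in a wiki page. The sketch below assumes two hypothetical log streams and role names; it shows a policy object that answers what is logged, who can read it, and how long it is kept.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet


@dataclass(frozen=True)
class LogPolicy:
    fields: FrozenSet[str]        # what is logged
    reader_roles: FrozenSet[str]  # who can access it
    retention_days: int           # how long it is retained


# Hypothetical streams, fields, and roles, for illustration only.
POLICIES: Dict[str, LogPolicy] = {
    "operational_telemetry": LogPolicy(
        fields=frozenset({"timestamp", "latency_ms", "status_code"}),
        reader_roles=frozenset({"sre", "ml_engineer"}),
        retention_days=30,
    ),
    "security_audit": LogPolicy(
        fields=frozenset({"timestamp", "actor_token", "action"}),
        reader_roles=frozenset({"security"}),
        retention_days=365,
    ),
}


def can_read(role: str, stream: str) -> bool:
    """Deny by default: unknown streams and unlisted roles get no access."""
    policy = POLICIES.get(stream)
    return policy is not None and role in policy.reader_roles
```

Keeping the policy in code means it can be reviewed, versioned, and tested like any other configuration.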

3. Segregated Storage

Separate:

- operational telemetry

- security audit logs

- model evaluation datasets

This prevents cross-contamination.
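With Python's standard `logging` module, one way to keep streams apart is to give each its own logger and handler and to turn off propagation so records do not also reach the root logger. The logger names below are assumptions, and in-memory buffers stand in for what would normally be separate files or storage backends.

```python
import io
import logging


def make_isolated_logger(name: str, handler: logging.Handler) -> logging.Logger:
    """Create a logger that writes only to its own handler."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep records out of the root logger's handlers
    logger.handlers.clear()   # avoid duplicate handlers if called twice
    logger.addHandler(handler)
    return logger


# In-memory sinks for demonstration; real systems would use separate destinations.
telemetry_sink = io.StringIO()
audit_sink = io.StringIO()

telemetry = make_isolated_logger("ai.telemetry", logging.StreamHandler(telemetry_sink))
audit = make_isolated_logger("ai.audit", logging.StreamHandler(audit_sink))

telemetry.info("latency_ms=412 status=200")
audit.info("actor=tok_ab12 action=export_logs")
```

Each sink now contains only its own stream, so access rules and retention can be set per destination.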

4. Encryption and Access Controls

Logs should be protected like any sensitive dataset.

Use:

- encryption at rest

- role-based access control

- monitoring and auditing of log access

Takeaway:

AI logging must be treated as a security system—not merely a developer convenience.

Data Minimization: The Most Effective Risk Reduction Strategy

The most reliable way to reduce AI data logging risks is straightforward:

collect less data.

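This principle appears consistently in security frameworks and regulatory guidance. GDPR Article 5(1)(c), for example, requires that personal data be limited to what is necessary for the purpose of processing.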

Practical Implementation Methods

Prompt Redaction

Automatically remove:

- names

- account numbers

- document IDs

before logs are stored.
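A minimal redaction pass might look like the sketch below. The `ACCT-` and `DOC-` formats are invented for illustration and would need to match your own identifier schemes. Names are harder: regular expressions cannot find them reliably, so this sketch only masks names the caller already knows (for example, the authenticated user's name).

```python
import re
from typing import Iterable

# Illustrative patterns only; the ACCT- and DOC- formats are hypothetical.
REDACTION_PATTERNS = [
    (re.compile(r"\bACCT-\d{6,}\b"), "[ACCOUNT]"),
    (re.compile(r"\bDOC-\d+\b"), "[DOC_ID]"),
]


def redact(text: str, known_names: Iterable[str] = ()) -> str:
    """Mask account numbers, document IDs, and caller-supplied names before logging."""
    for pattern, placeholder in REDACTION_PATTERNS:
        text = pattern.sub(placeholder, text)
    for name in known_names:
        if name:  # skip empty strings, which would match everywhere
            text = re.sub(re.escape(name), "[NAME]", text, flags=re.IGNORECASE)
    return text


redacted = redact(
    "Jane Doe asked about ACCT-00123456 and DOC-778.",
    known_names=["Jane Doe"],
)
```

Pattern-based redaction reduces exposure but does not guarantee it, so it works best combined with shorter retention and restricted access rather than as the only control.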

Tokenized Identifiers

Instead of storing user data directly, store anonymized tokens that map to internal records.
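One common way to produce such tokens is a keyed hash (HMAC). The sketch below assumes a secret key held outside the logging system; the key value and the `tok_` prefix are placeholders. Strictly speaking this is pseudonymization rather than anonymization, because anyone holding the key can recompute the token for a known user ID.

```python
import hashlib
import hmac

# Placeholder only; in practice the key would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"


def tokenize_identifier(user_id: str, secret_key: bytes = SECRET_KEY) -> str:
    """Derive a stable token from a user ID without storing the ID itself."""
    digest = hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


token = tokenize_identifier("user-1842")
```

The same user always maps to the same token, so per-user metrics still work, while a leaked log file alone does not reveal who the users are.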

Log Sampling

Not every interaction needs to be recorded. Sampling reduces storage exposure while preserving observability.
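Sampling can be made deterministic by hashing an interaction ID, so that every service in the pipeline makes the same keep-or-drop decision for a given interaction. This is a sketch under that assumption; the function name and the use of SHA-256 are illustrative choices.

```python
import hashlib


def should_log(interaction_id: str, sample_rate: float) -> bool:
    """Return True for roughly `sample_rate` of interaction IDs, deterministically."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0.0 and 1.0")
    digest = hashlib.sha256(interaction_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # integer in [0, 2**64)
    return bucket < sample_rate * 2**64


# Keep about 10% of interactions.
keep = should_log("interaction-000123", sample_rate=0.1)
```

Errors and security events are usually logged regardless of sampling; the sample rate applies to routine traffic.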

Short Retention Windows

Many AI logs do not require long-term storage.

Retention policies of:

- 7 days

- 30 days

- 90 days

sharply limit how much historical data a breach can expose.
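Retention only reduces exposure if something enforces it. Managed logging services typically offer built-in expiry settings; where logs live in your own storage, a scheduled purge along the lines of the sketch below is one option. The record shape (a dict with a timezone-aware `logged_at` field) is an assumption.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List, Optional


def purge_expired(
    records: List[Dict[str, Any]],
    retention_days: int,
    now: Optional[datetime] = None,
) -> List[Dict[str, Any]]:
    """Return only the records that are still inside the retention window."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    return [record for record in records if record["logged_at"] >= cutoff]


# Example with a fixed clock so the result is reproducible.
fixed_now = datetime(2024, 3, 1, tzinfo=timezone.utc)
records = [
    {"id": "a", "logged_at": datetime(2024, 2, 25, tzinfo=timezone.utc)},
    {"id": "b", "logged_at": datetime(2024, 1, 1, tzinfo=timezone.utc)},
]
remaining = purge_expired(records, retention_days=30, now=fixed_now)
```

The purge has to run in every system that holds a copy, otherwise the shortest window in the pipeline is not the one that actually applies.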

Takeaway:

Reducing log volume is often more effective than trying to secure massive datasets after they already exist.

The Future of Enterprise AI Monitoring

The first wave of AI adoption focused on capability.

The next wave will focus on governance.

As AI becomes embedded in customer support, internal knowledge systems, and operational workflows, logging architecture becomes just as important as model performance.

Forward-thinking organizations are beginning to treat AI observability as a security discipline rather than a debugging tool.

That means designing systems where:

- prompts are controlled

- metadata is minimized

- logs are segmented

- audit trails are intentional

The organizations that adopt AI responsibly will not be those with the most powerful models.

They will be the ones who understand exactly what their AI systems remember—and what they don’t.

If your organization has already deployed AI assistants or automation systems, the most valuable next step is not another model upgrade.

It is a structured AI logging audit that maps every place your system stores operational data.

Because the real exposure risk rarely lives inside the AI model.

It lives in the logs.

FAQ — AI Data Logging Risks

What are AI data logging risks?

AI data logging risks occur when AI systems store prompts, responses, or metadata that contain sensitive information. These logs may include internal documents, customer interactions, or operational identifiers. If not properly controlled, they can create exposure through monitoring tools, third-party services, or long-term storage.

Do AI systems automatically log prompts?

Many AI platforms log prompts by default to support debugging, abuse monitoring, and system optimization. These logs may include the full text submitted by users. Organizations deploying AI should review logging settings and determine whether prompt storage can be limited, anonymized, or disabled.

Why is AI metadata considered sensitive?

Metadata such as IP addresses, timestamps, session IDs, and user identifiers can reveal behavioral patterns and operational activity. Even without the prompt content, metadata can expose which users interacted with AI systems, when interactions occurred, and which services were accessed.

Can AI logs be used for model training?

Some AI providers use prompt and response logs to improve model performance. This process may include storing and analyzing interactions. Organizations concerned about data privacy should verify whether their vendor isolates customer data and allows training opt-outs.

How long should AI logs be retained?

Retention policies depend on operational needs, but shorter windows reduce risk. Many enterprises use retention periods between 7 and 90 days for AI telemetry logs. Security audit logs may require longer storage depending on compliance requirements.

What is the safest way to monitor AI systems?

The safest approach combines minimal logging, strict access controls, and segmented storage. Organizations should separate operational telemetry from user prompts, encrypt log storage, and regularly audit which systems receive AI interaction data.