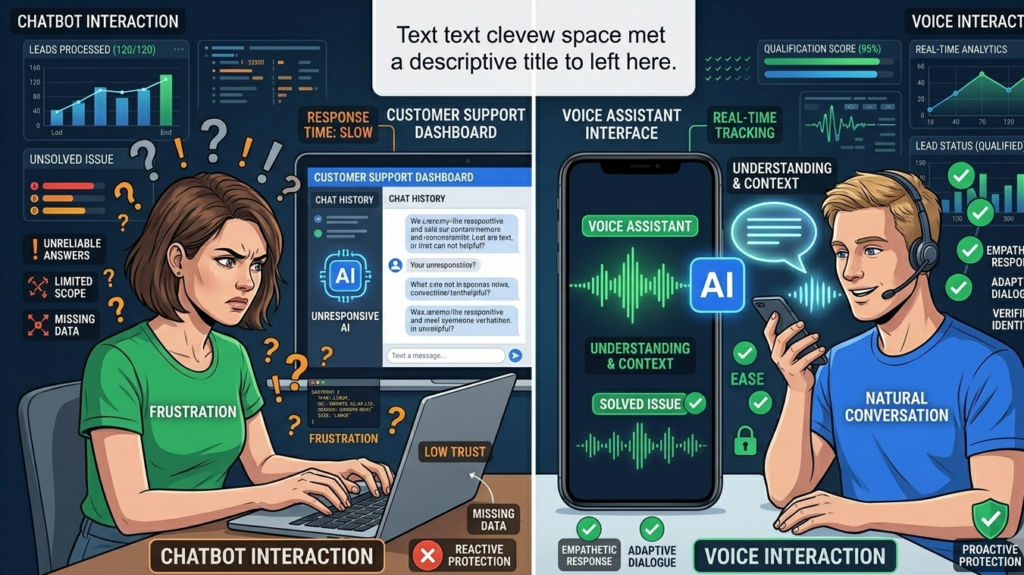

A frustrated customer opens a support chat.

They type a question.

The chatbot replies instantly — but the answer feels scripted, mechanical, and detached from the situation. The customer rephrases the question. The bot responds again with something slightly off.

Within seconds, the interaction shifts from assistance to friction.

Now imagine the same customer calling a voice assistant instead.

They ask the same question. The assistant responds with natural pacing, conversational tone, and the subtle rhythm of human dialogue.

The experience feels different.

The answer may contain the same information, but the interaction feels more trustworthy.

This contrast explains why customers trust voice AI more than chatbots across many service environments. It is not only about accuracy or speed. The difference emerges from how humans process conversation psychologically.

Voice carries emotional signals, conversational cues, and timing patterns that text interfaces cannot replicate.

For customer experience leaders, this insight matters.

Understanding the psychology of conversational interaction can determine whether automation improves service quality — or quietly erodes customer trust.

The shift toward voice-based AI systems reflects a deeper principle: people evaluate technology using the same instincts they use to judge other humans.

That instinct changes everything about how conversational systems should be designed.

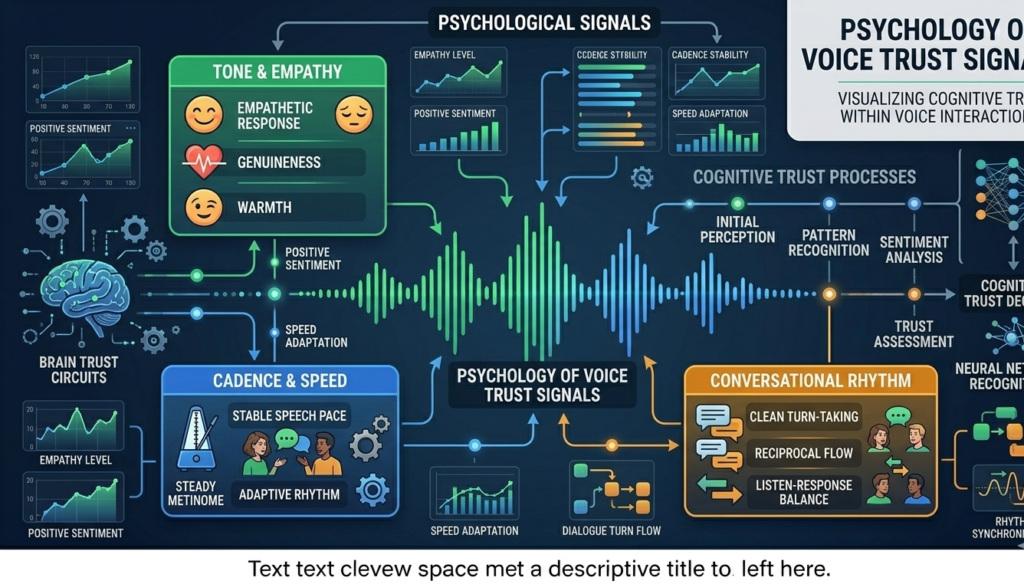

Cognitive Trust Signals Hidden Inside Voice Conversations

Humans evolved to evaluate trust primarily through speech.

Long before written communication existed, voice tone, cadence, and conversational rhythm served as signals of credibility and intent.

Those instincts remain deeply embedded in human cognition.

When customers interact with voice AI, their brains automatically evaluate several trust signals.

Vocal Cadence and Natural Timing

Human conversation follows predictable timing patterns.

Pauses between responses signal thoughtfulness. Slight variations in speech pace indicate attentiveness. Natural transitions between phrases create conversational flow.

Text-based chatbots lack these cues.

Voice interfaces, however, can replicate them.

Even subtle pauses can make responses feel more deliberate and authentic.

Micro-insight:

Trust often depends less on what is said than on how naturally it is delivered.

Prosody and Emotional Interpretation

Prosody refers to the rhythm, pitch, and emphasis within speech.

It conveys emotional context that text cannot easily reproduce.

For example:

- rising pitch can indicate engagement

- slower pacing suggests careful explanation

- tonal variation signals empathy

Voice AI systems designed with conversational prosody feel less robotic because they mimic human communication patterns.
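One common way to express pacing and prosody to a text-to-speech engine is SSML, the W3C Speech Synthesis Markup Language, which defines `<break>` and `<prosody>` elements. The sketch below is illustrative only: `build_ssml` is a hypothetical helper, the 300 ms pause and the `slow` rate are assumed values rather than tuned recommendations, and support for individual SSML attributes varies by speech engine.

```python
from xml.sax.saxutils import escape


def build_ssml(acknowledgment: str, answer: str, pause_ms: int = 300,
               rate: str = "slow") -> str:
    """Wrap a two-part reply in SSML with a short pause and slower pacing.

    build_ssml is a hypothetical helper; pause_ms and rate are illustrative
    defaults, not values taken from the article.
    """
    return (
        "<speak>"
        f"{escape(acknowledgment)}"            # escape &, <, > in spoken text
        f'<break time="{pause_ms}ms"/>'        # brief pause before the answer
        f'<prosody rate="{rate}">{escape(answer)}</prosody>'
        "</speak>"
    )


ssml = build_ssml("Let me check that for you.", "Your order ships on Tuesday.")
print(ssml)
```

Keeping the acknowledgment and the answer as separate arguments makes it easy to vary the pause between them without touching the wording.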

Conversational Turn-Taking

Human dialogue follows a pattern of turn-taking.

People expect slight overlaps, acknowledgments, and verbal confirmations.

Voice interfaces can simulate these conversational mechanics through phrases like:

- “Let me check that for you.”

- “One moment while I look that up.”

These conversational markers create the illusion of attentive listening.

Practical takeaway:

Designing voice AI requires attention to conversational rhythm and pacing — not just language accuracy.

Emotional Response: Why Tone Creates Trust Faster Than Text

Trust in customer interactions is rarely logical.

It is emotional first.

Tone of voice carries emotional weight that written words struggle to replicate.

This effect is particularly visible in customer service interactions.

Voice Reduces Ambiguity

Text messages can easily be misinterpreted.

A short chatbot response may appear dismissive, even if the intent was neutral.

Voice eliminates much of this ambiguity.

Tone clarifies intent.

Humans Associate Voice With Presence

A voice implies presence — even when the interaction is automated.

Customers perceive spoken responses as closer to human assistance than written text.

This perception triggers social behaviors normally reserved for interpersonal communication.

Emotional Regulation During Support Calls

Customers often contact support when they are already frustrated.

Voice interaction can calm situations more effectively than chat interfaces because tone signals empathy.

Even simple phrases spoken naturally can defuse tension.

Micro-insight:

Voice creates emotional context that text cannot replicate — and emotional context drives trust.

Practical takeaway:

Organizations designing automated service experiences should consider voice interaction for moments when customer emotions run high.

Chatbot Fatigue: The Hidden Cost of Text-Based Automation

Many organizations implemented chatbots expecting faster support and reduced service costs.

The reality often looks different.

Customers frequently abandon chatbot interactions before resolution.

This phenomenon is increasingly known as chatbot fatigue.

Repetitive Interaction Loops

Chatbots often require customers to reformulate questions multiple times.

The experience feels transactional rather than conversational.

Customers quickly lose patience.

Cognitive Load

Text conversations require more effort from users.

They must:

- type responses

- interpret written messages

- manage conversation structure

Voice removes much of this cognitive burden.

For most people, speaking is faster and more natural than typing.

Perception of Scripted Responses

Customers recognize scripted chatbot replies quickly.

Even when responses are accurate, the interaction feels artificial.

Voice-based responses can mask some of that rigidity because natural delivery softens structured language.

Practical takeaway:

Chatbots work well for simple transactional tasks, but voice interfaces often outperform them when conversations require nuance or emotional sensitivity.

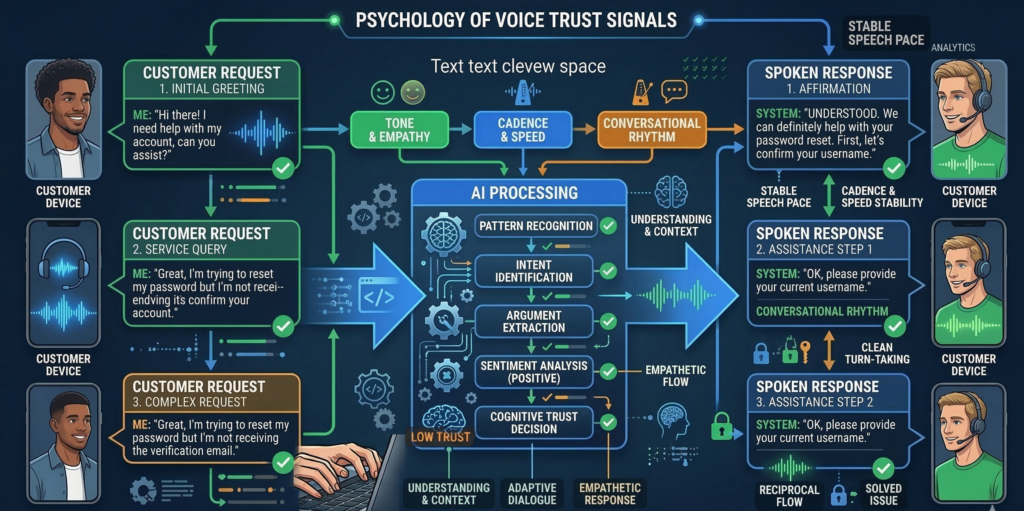

Real-Time Responsiveness and Conversational Flow

Another reason customers trust voice AI more than chatbots lies in the pace and flow of the conversational exchange.

Voice interactions operate at the pace of natural conversation.

Text conversations do not.

Response Latency

In chat interfaces, response delays feel awkward.

Customers stare at typing indicators or wait for message generation.

In voice conversations, slight pauses feel natural.

Humans expect short thinking delays during speech.

Continuous Interaction

Voice conversations allow continuous dialogue.

Customers can interrupt, clarify, or elaborate naturally.

Chatbots struggle with these conversational dynamics.

Faster Problem Resolution

Voice interactions compress multiple steps into a single exchange.

Instead of typing several messages, customers can explain the entire problem verbally.

The system can extract intent and respond immediately.

Micro-insight:

Speed alone does not build trust — natural conversational flow does.

Practical takeaway:

When designing AI-powered support systems, prioritize conversational continuity over raw response speed.

Designing Voice UX That Feels Authentic

Voice AI that feels unnatural erodes trust quickly.

The difference between helpful and frustrating voice systems often comes down to conversation design.

Principles of Natural Voice UX

Effective voice interactions share several characteristics:

- Context awareness – remembering earlier parts of the conversation

- Conversational brevity – responses that are informative but concise

- Natural acknowledgments – phrases that confirm understanding

- Escalation paths – seamless handoff to human agents when needed

Avoiding Robotic Interaction Patterns

Poorly designed voice systems often repeat rigid phrases.

For example:

“Your request has been received.”

Human conversations rarely sound like this.

Better phrasing might be:

“I’ve got that — checking your account now.”

Small changes dramatically improve perceived authenticity.

Integrating Voice AI Into Operational Systems

Voice assistants become far more useful when connected to internal tools such as:

- CRM platforms

- scheduling systems

- support ticketing platforms

Systems designed for operational integration allow voice assistants to resolve problems rather than merely answer questions.

Platforms like Aivorys (https://aivorys.com) are built for this use case — enabling private AI assistants trained on internal knowledge bases while integrating with operational systems so voice interactions can execute real workflows rather than isolated responses.
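The difference between answering a question and resolving a problem can be sketched as a dispatch table that maps a recognized intent to an action. Everything here is a hypothetical illustration: the intent names, the `slots` dictionary, and the handler functions are assumptions, and the handlers only return strings where a real system would call a scheduling or ticketing API.

```python
from typing import Callable, Dict


def reschedule_appointment(slots: Dict[str, str]) -> str:
    # Placeholder: a real handler would call the scheduling system here.
    return f"I've moved your appointment to {slots['date']}."


def open_ticket(slots: Dict[str, str]) -> str:
    # Placeholder: a real handler would create a record in the ticketing platform.
    return f"I've opened a ticket about {slots['issue']}."


# Hypothetical intent names mapped to the functions that act on them.
HANDLERS: Dict[str, Callable[[Dict[str, str]], str]] = {
    "reschedule_appointment": reschedule_appointment,
    "open_ticket": open_ticket,
}


def handle_intent(intent: str, slots: Dict[str, str]) -> str:
    """Run the handler for a recognized intent, or hand off to a person."""
    handler = HANDLERS.get(intent)
    if handler is None:
        return "Let me connect you with someone who can help."
    return handler(slots)


print(handle_intent("open_ticket", {"issue": "billing"}))
```

Unrecognized intents fall through to a human handoff, which keeps the escalation path explicit rather than leaving the caller in a loop.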

Practical takeaway:

Voice AI should function as an operational interface — not just a conversational layer.

Behavioral Data: What Voice Interactions Reveal About Customers

Voice interactions generate a category of behavioral insight that text interfaces rarely capture.

This insight can significantly improve customer experience strategies.

Conversational Analytics

Voice AI systems can analyze conversational patterns such as:

- sentiment changes during conversations

- common points of confusion

- frequently repeated questions

These signals reveal where service processes break down.
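As a rough illustration of the "frequently repeated questions" signal, the sketch below counts near-identical utterances across transcripts. It assumes transcripts are already available as plain strings and treats two utterances as the same question when they match after lowercasing and stripping punctuation, which is a deliberately naive stand-in for whatever matching a production system would use. The function names are hypothetical.

```python
import string
from collections import Counter
from typing import Iterable, List, Tuple


def normalize(utterance: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace."""
    cleaned = utterance.lower().translate(str.maketrans("", "", string.punctuation))
    return " ".join(cleaned.split())


def top_repeated_questions(utterances: Iterable[str],
                           min_count: int = 2) -> List[Tuple[str, int]]:
    """Return normalized utterances seen at least min_count times, most frequent first."""
    counts = Counter(normalize(u) for u in utterances)
    return [(q, n) for q, n in counts.most_common() if n >= min_count]


repeated = top_repeated_questions([
    "Where is my order?",
    "where is my order",
    "Cancel my plan.",
])
print(repeated)
```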

Emotional Trend Detection

Tone analysis can identify frustration, urgency, or confusion.

This allows systems to escalate conversations to human agents when needed.
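A simple escalation rule might look like the sketch below. It assumes an upstream tone model already produces a per-turn sentiment score between -1 (very negative) and 1 (very positive). The window size and threshold are illustrative assumptions, and `should_escalate` is a hypothetical name.

```python
from typing import Sequence


def should_escalate(sentiment_scores: Sequence[float], window: int = 3,
                    threshold: float = -0.4) -> bool:
    """Return True when the average of the most recent scores falls below threshold.

    Scores are assumed to lie in [-1, 1]; window and threshold are
    illustrative defaults, not recommended values.
    """
    if len(sentiment_scores) < window:
        return False  # not enough turns yet to judge a trend
    recent = sentiment_scores[-window:]
    return sum(recent) / window < threshold


print(should_escalate([0.2, -0.5, -0.6, -0.7]))
```

Averaging over a short window, rather than reacting to a single turn, avoids handing off a call because of one sharply worded sentence.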

Customer Intent Patterns

Voice conversations often reveal customer intent more clearly than text.

People naturally explain context when speaking.

This richer data helps organizations refine service workflows.

Practical takeaway:

Voice AI does more than resolve customer issues: it provides behavioral insight that can improve the entire customer experience.


Framework: Designing Trustworthy Voice AI Experiences

Customer experience leaders implementing voice AI can evaluate their systems using the VOICE Trust Framework.

VOICE Trust Framework

V — Vocal Authenticity

Does the AI speak with natural cadence, tone variation, and conversational pacing?

O — Operational Integration

Can the assistant access real systems like CRM, scheduling tools, or support databases?

I — Intent Recognition

Does the system understand complex customer requests without repeated clarification?

C — Conversational Flow

Are conversations continuous and natural, or do they feel rigid and scripted?

E — Escalation Safety

Can the system transfer complex or emotional interactions to human agents quickly?

Organizations that score well across these five dimensions are better positioned to earn customer trust in automated voice interactions.
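One way to turn the five questions into a repeatable review is a small scorecard. The framework itself does not define a scoring scale, so the 1 to 5 rating and the equal weighting below are assumptions, and `VoiceScore` is a hypothetical name.

```python
from dataclasses import asdict, dataclass


@dataclass
class VoiceScore:
    """One rating per VOICE dimension, on an assumed 1-5 scale."""
    vocal_authenticity: int
    operational_integration: int
    intent_recognition: int
    conversational_flow: int
    escalation_safety: int

    def average(self) -> float:
        # Equal weighting across dimensions is an assumption.
        values = list(asdict(self).values())
        return sum(values) / len(values)

    def weakest(self) -> str:
        # Name of the lowest-rated dimension (first one wins on ties).
        scores = asdict(self)
        return min(scores, key=scores.get)


score = VoiceScore(4, 2, 3, 4, 5)
print(score.average(), score.weakest())
```

Reporting the weakest dimension alongside the average keeps one strong area from hiding a gap elsewhere.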

Practical takeaway:

Trust in voice AI depends less on intelligence and more on conversational authenticity and operational capability.

Frequently Asked Questions

Why do customers trust voice AI more than chatbots?

Customers trust voice AI more than chatbots because voice interactions replicate natural human conversation. Tone, pacing, and conversational rhythm create emotional cues that signal attentiveness and empathy. These cues reduce ambiguity and make automated interactions feel more authentic than text-based chat responses.

Is voice AI more effective for customer service than chatbots?

Voice AI often performs better in situations involving complex issues or emotional customers. Speaking allows customers to explain problems faster and more naturally than typing. Voice systems also provide tone and pacing cues that help build trust during support interactions.

What is chatbot fatigue?

Chatbot fatigue refers to customer frustration caused by repetitive chatbot interactions. It typically occurs when bots require customers to repeat questions, navigate rigid conversation paths, or interpret scripted responses. Over time, this experience reduces trust in automated support systems.

How does voice UX affect customer trust?

Voice UX influences trust through conversational design elements such as tone, cadence, pacing, and acknowledgment phrases. When voice systems mirror human conversational patterns, customers perceive them as more attentive and helpful, even when the responses are automated.

Can voice AI understand emotional customer behavior?

Many voice AI systems analyze conversational signals such as tone changes, speaking pace, and word choice. These signals help detect frustration or urgency, allowing systems to escalate interactions or adjust responses to improve the customer experience.

Conclusion

Trust in customer service rarely depends on technology alone.

It depends on how the interaction feels.

Voice interfaces succeed where many chatbots struggle because they align with the way humans evolved to communicate. Tone, rhythm, and conversational pacing create signals of attentiveness that text interfaces cannot replicate.

For customer experience leaders, this insight reframes how automation should be deployed.

The goal is not simply replacing human agents with AI.

It is designing automated systems that behave like capable conversational partners — responsive, natural, and connected to the operational tools required to resolve real problems.

Organizations that understand this distinction will build automated service experiences customers actually trust.

Those that ignore it will continue wondering why their chatbots feel efficient internally yet frustrating to the people they were supposed to help.

If you’re exploring how conversational AI could reshape your customer experience strategy, a deeper evaluation of voice-driven service design is often the most revealing next step.