Most AI failures in business environments don’t originate in the model.

They originate in compliance architecture.

An organization deploys a generative AI tool to summarize customer tickets, automate intake forms, or assist with internal decision-making. It works well. Productivity increases.

But months later, the compliance team discovers something unsettling.

Customer data is being sent to external inference APIs.

Sensitive prompts are logged in vendor analytics systems.

There’s no clear audit trail showing who asked the AI what, when, and why.

Suddenly, the conversation shifts from innovation to liability.

AI compliance risk rarely appears during the pilot phase. It appears during the audit.

Regulations and standards such as GDPR, HIPAA, and SOC 2 were not written with generative AI in mind, yet organizations must still demonstrate data control, auditability, and responsible processing when AI becomes part of their operational stack.

The uncomfortable truth: most AI deployments treat compliance as a post-implementation concern.

By the time governance controls are added, the architecture already exposes risk.

This article breaks down where AI compliance failures originate, why common deployments violate regulatory expectations, and how to design compliance-first AI systems from day one.

Where AI Compliance Failures Actually Begin

When compliance issues emerge in AI deployments, leadership often assumes the technology failed.

In reality, the root cause is usually architectural.

Most organizations introduce AI in three predictable stages:

1. Experimentation with public models

2. Limited workflow integrations

3. Organization-wide adoption

The compliance problems appear during stage three.

The “Shadow AI” Phase

During experimentation, teams begin using AI tools independently:

- marketing generating content

- support teams summarizing tickets

- developers using AI for code assistance

- sales teams drafting outreach

These tools are often accessed through browser interfaces or APIs outside the organization’s governance framework.

No central approval.

No security review.

No compliance documentation.

This phenomenon is sometimes called shadow AI.

It mirrors the early days of shadow IT — except the risk profile is higher because AI processes sensitive information directly through prompts.

Why Compliance Teams Discover Problems Late

Compliance officers typically audit:

- data storage systems

- databases

- customer platforms

- cloud infrastructure

AI prompts, however, behave differently.

They can contain customer data, personal identifiers, health information, legal documents, or financial analysis — all embedded inside natural language requests.

If prompt flows aren’t mapped early, they become invisible compliance exposure points.

Key insight:

AI compliance risk emerges when AI is treated as a tool instead of a governed system.

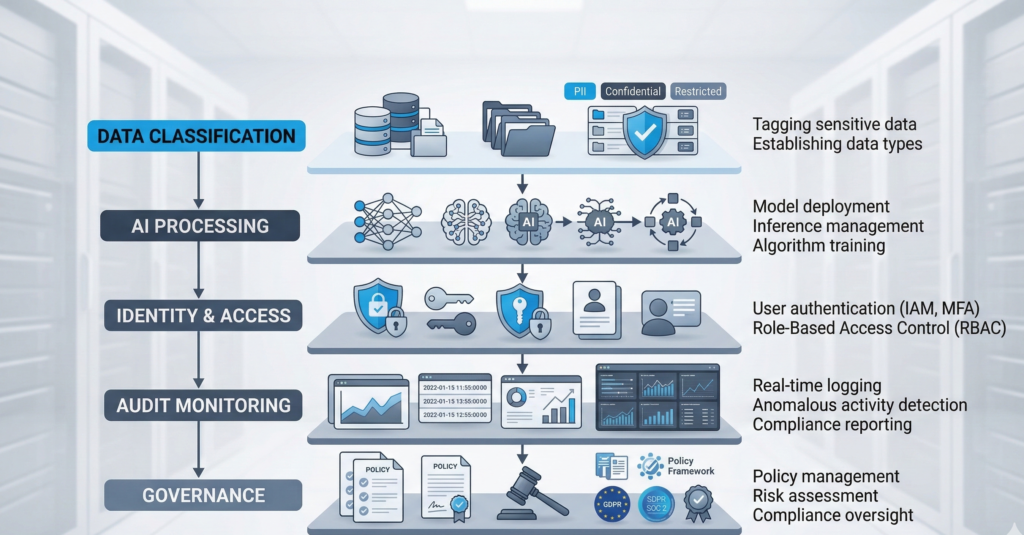

AI Data Mapping and Classification Pitfalls

Every compliance framework ultimately comes down to one core question:

Where does sensitive data go?

In traditional software architecture, that question is easier to answer because data moves through defined pipelines.

AI changes the dynamic.

The Prompt as a Data Container

Prompts can contain:

- customer names

- transaction records

- medical notes

- internal documents

- support conversations

- legal analysis

This means a single prompt can unintentionally contain multiple regulated data types simultaneously.

For example:

A healthcare intake assistant might send prompts containing:

- patient identifiers

- appointment history

- medical symptoms

If that prompt travels to an external AI provider without a business associate agreement (BAA) in place, the organization may already be out of compliance with HIPAA.

The Classification Failure

Most companies maintain data classification policies such as:

- Public

- Internal

- Confidential

- Regulated

But AI prompts rarely pass through classification filters.

Employees paste information directly into AI tools.

No automatic tagging.

No policy enforcement.

What Mature Organizations Do Instead

Organizations that successfully manage AI compliance risk implement prompt-aware data governance.

Typical controls include:

- prompt filtering layers before AI requests are sent

- automated PII detection systems

- tokenization or redaction pipelines

- separate AI environments for regulated workloads
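
As a rough illustration of the filtering and redaction controls listed above, here is a minimal Python sketch of a prompt redaction step. The patterns, the placeholder labels, and the function name `redact_prompt` are assumptions made for this example. Regular expressions alone miss many kinds of PII, so a production filter would normally sit alongside a dedicated detection service.

```python
import re

# Hypothetical patterns for illustration only. They cover a few common
# US-style formats and are not an exhaustive PII definition.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace matched PII with placeholders and count matches per type."""
    counts: dict[str, int] = {}
    redacted = prompt
    for label, pattern in PII_PATTERNS.items():
        # subn returns the new string and the number of replacements made.
        redacted, n = pattern.subn(f"[{label}]", redacted)
        if n:
            counts[label] = n
    return redacted, counts
```

Returning the match counts alongside the redacted text lets the calling layer decide whether to forward the prompt, block it, or route it to a restricted environment.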

Actionable takeaway:

Before deploying AI broadly, map every data category that could appear in prompts — not just structured datasets.

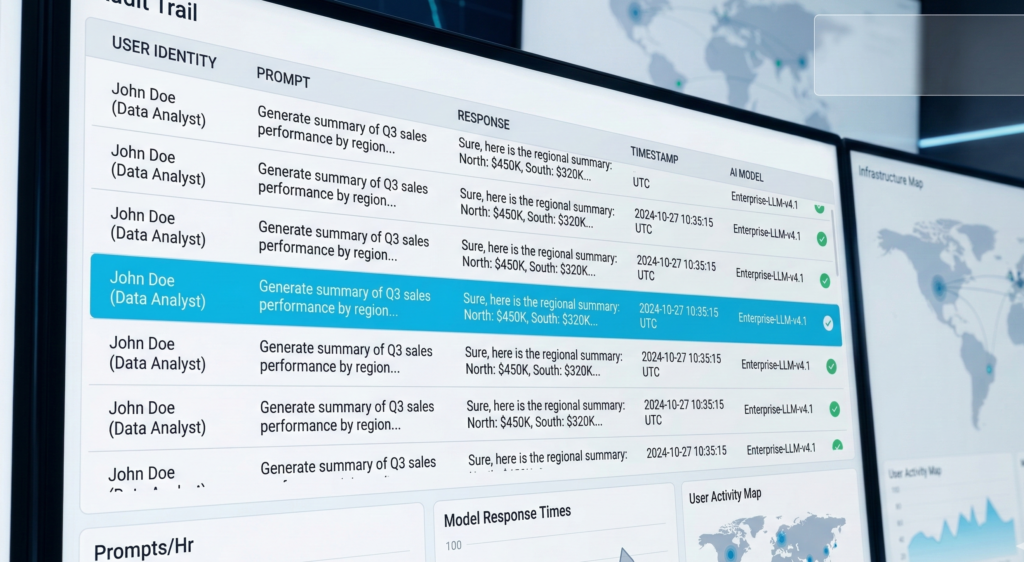

Why AI Systems Require Full Audit Trails

Traditional software logs transactions.

AI systems must also log decisions and the inputs that produced them.

This distinction matters for compliance.

What Regulators Expect

Across frameworks like GDPR, HIPAA, and SOC 2, regulatory guidance increasingly emphasizes traceability.

Organizations must demonstrate:

- who accessed sensitive information

- when the interaction occurred

- what system processed it

- what action resulted

With AI systems, that means capturing:

- prompts submitted by users

- model responses

- system actions triggered by responses

- user identity and role

The Audit Gap Most Companies Miss

Many AI integrations capture only API request logs.

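That is insufficient. Request logs typically show that a call was made, not who initiated it, what data it referenced, or what actions followed.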

A proper AI audit trail should include:

User Context

- employee identity

- role or department

- session timestamp

Prompt Context

- full prompt text

- referenced internal data sources

Model Output

- response text

- automated actions triggered

System Actions

- database updates

- emails sent

- workflow changes

Without these logs, organizations cannot reconstruct how an AI-driven decision occurred.

In regulated industries, that becomes a major governance gap.
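
The four groups above (user context, prompt context, model output, system actions) could be captured in a single structured record. The sketch below is one possible shape, not a standard schema. The class name `AIAuditRecord` and its field names are assumptions chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AIAuditRecord:
    # User context
    user_id: str
    role: str
    # Prompt context
    prompt: str
    data_sources: list[str]
    # Model output
    response: str
    # System actions triggered by the response (emails, database updates, ...)
    actions: list[str] = field(default_factory=list)
    # Session timestamp, recorded in UTC at creation time
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        """Serialize the record as one JSON line for an append-only log."""
        return json.dumps(asdict(self), sort_keys=True)
```

Writing one such record per interaction to append-only storage is a common way to make the decision path reconstructable later. Retention periods and whether to store full prompt text are policy decisions this sketch does not settle.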

Key insight:

If you cannot reconstruct the AI decision process, auditors will treat the system as uncontrolled automation.

Vendor Risk Management in AI Stacks

AI systems rarely exist in isolation.

They typically involve multiple vendors:

- model providers

- API orchestration layers

- vector databases

- analytics tools

- communication platforms

Each layer introduces potential compliance exposure.

The Vendor Stack Problem

A typical AI workflow might look like this:

- CRM sends customer data to an AI workflow

- Workflow platform sends prompts to an LLM provider

- Response is stored in a database

- Automation platform triggers emails or actions

Each vendor may process sensitive data.

But most organizations only evaluate one of them — the model provider.

Vendor Due Diligence Questions

Compliance teams should ask:

- Where is AI inference processed geographically?

- Are prompts stored or retained?

- What logging systems exist?

- Do subcontractors process requests?

- Are SOC 2 or equivalent controls verified?

Compliance practitioners generally advise extending AI vendor risk reviews to the entire orchestration stack, not just the model.
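
One way to keep the whole stack in view is to record every vendor in the pipeline together with the answers to the questions above, then flag the gaps. The sketch below assumes a hypothetical `Vendor` record and made-up vendor names. It illustrates the idea and is not a complete review process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str                # hypothetical vendor label
    layer: str               # e.g. "model provider", "vector database"
    security_reviewed: bool  # passed an internal security review
    retains_prompts: bool    # vendor stores prompt or response content


def review_gaps(pipeline: list[Vendor]) -> list[str]:
    """Return findings for vendors that still need compliance attention."""
    findings = []
    for vendor in pipeline:
        if not vendor.security_reviewed:
            findings.append(f"{vendor.name} ({vendor.layer}): no security review")
        if vendor.retains_prompts:
            findings.append(f"{vendor.name} ({vendor.layer}): retains prompt data")
    return findings


# Example pipeline with invented names; only the model provider was reviewed.
pipeline = [
    Vendor("ExampleLLM", "model provider", True, False),
    Vendor("ExampleFlow", "workflow platform", False, True),
    Vendor("ExampleVectors", "vector database", False, False),
]
```

Here `review_gaps(pipeline)` reports three findings, none of them for the model provider, which mirrors the point that the unreviewed layers are usually the ones around the model.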

Where Governance Starts to Matter

Organizations deploying private AI environments gain control over:

- prompt storage

- inference infrastructure

- logging policies

- data retention rules

Platforms like Aivorys (https://aivorys.com) are designed for this governance layer — private AI environments with controlled data handling, voice automation, and CRM-connected workflows that keep sensitive operational data inside governed infrastructure.

Actionable takeaway:

Treat AI deployments like supply chains — every vendor in the pipeline must pass compliance scrutiny.

The AI Governance Gap in Most Organizations

Many companies assume governance begins after deployment.

That assumption creates risk.

Governance Should Exist Before the First Prompt

A compliance-ready AI program typically defines:

Policy

- acceptable AI use cases

- prohibited data types

- approval requirements

Architecture

- private vs public AI deployment rules

- approved integrations

- prompt filtering layers

Monitoring

- audit logs

- anomaly detection

- compliance reporting

The Cultural Challenge

Technology controls alone aren’t enough.

Organizations must train employees to understand:

- what data cannot be entered into AI systems

- how AI responses should be verified

- when human oversight is required

This is similar to security awareness training — except the focus is data exposure through prompts.

Key insight:

AI governance is not a document. It is a system of architecture, monitoring, and policy enforcement.

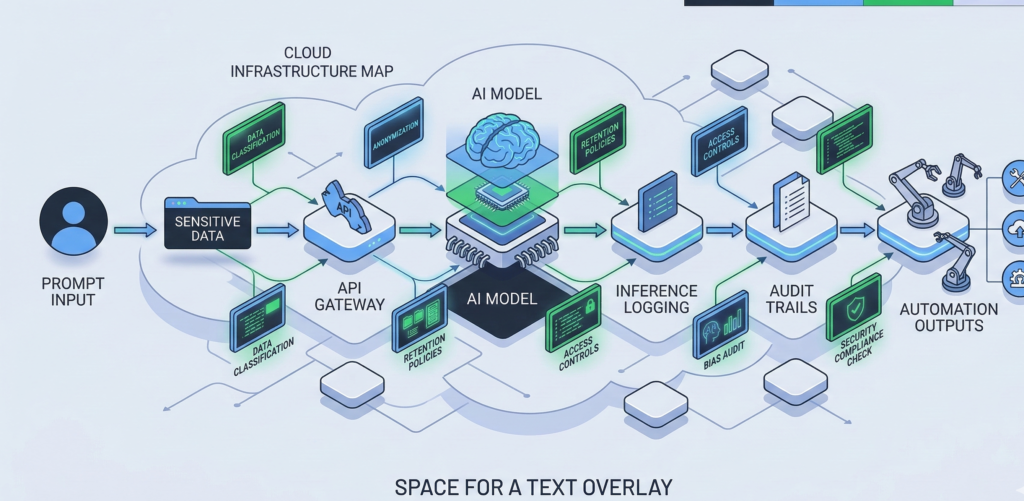

The Compliance-First AI Architecture Framework

Organizations deploying AI responsibly design governance into the architecture itself.

The following framework groups the controls discussed above into five layers.

The 5-Layer Compliance AI Framework

1. Data Classification Layer

Define what information AI systems can access.

Controls include:

- automated PII detection

- tokenization pipelines

- prompt filtering systems

2. AI Processing Layer

Determine where inference occurs:

- private infrastructure

- controlled cloud environments

- approved vendors

Sensitive workloads should never default to public inference endpoints.
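
A minimal sketch of that routing rule follows, assuming the four classification levels named earlier (Public, Internal, Confidential, Regulated). The environment labels and the function name `select_environment` are hypothetical. The key design choice is that an unclassified prompt fails closed to the most restricted environment.

```python
from enum import IntEnum
from typing import Optional


class DataClass(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


def select_environment(level: Optional[DataClass]) -> str:
    """Pick an inference environment for a prompt's classification level."""
    # Unknown classification is treated like regulated data (fail closed).
    if level is None or level >= DataClass.REGULATED:
        return "private-infrastructure"
    if level == DataClass.CONFIDENTIAL:
        return "controlled-cloud"
    # Public and internal data may go to a vendor that passed review.
    return "approved-vendor"
```

Where exactly the thresholds sit is a policy decision. Some organizations would keep confidential data on private infrastructure as well.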

3. Identity and Access Layer

AI access must follow the same controls as other enterprise systems:

- role-based access

- SSO authentication

- access logging
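
As a sketch of how role-based access and access logging fit together, the example below checks a role against an allow-list and records every attempt, including denied ones. The role names, action names, and the `authorize` function are assumptions for illustration. In practice the role would come from the organization's SSO or identity provider rather than a function argument.

```python
from datetime import datetime, timezone

# Hypothetical role-to-action mapping for illustration.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "support_agent": {"summarize_ticket"},
    "compliance_officer": {"summarize_ticket", "export_audit_log"},
}


def authorize(user_id: str, role: str, action: str, access_log: list[dict]) -> bool:
    """Check whether a role may perform an AI action and log the attempt."""
    # Unknown roles get an empty permission set, so they are always denied.
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    access_log.append(
        {
            "user_id": user_id,
            "role": role,
            "action": action,
            "allowed": allowed,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        }
    )
    return allowed
```

Logging denied attempts as well as granted ones gives the monitoring layer something to detect misuse with.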

4. Audit and Monitoring Layer

Capture complete interaction records:

- prompts

- outputs

- system actions

- user identities

5. Governance and Policy Layer

Define rules governing AI behavior:

- escalation protocols

- human-review thresholds

- acceptable data usage

Quick Compliance Readiness Checklist

Use this 10-point rubric to evaluate your AI environment.

Score 1 point for each “Yes.”

- ☐ Prompt data is classified before AI processing

- ☐ Sensitive data is automatically redacted or tokenized

- ☐ AI prompts and outputs are logged with user identity

- ☐ Model responses triggering actions are auditable

- ☐ AI infrastructure location is documented

- ☐ All AI vendors passed a security review

- ☐ AI data retention policies are defined

- ☐ AI systems integrate with identity management

- ☐ Compliance team approved AI use cases

- ☐ Incident response procedures include AI systems

Score Interpretation

8–10 → Compliance-ready architecture

5–7 → Moderate risk

0–4 → High compliance exposure
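
For teams that want to track the rubric over time, the scoring can be expressed in a few lines. This sketch assumes the ten checklist answers are supplied as booleans in checklist order. The function name `readiness` is hypothetical.

```python
def readiness(answers: list[bool]) -> tuple[int, str]:
    """Score the 10-point checklist and map the score to its tier."""
    if len(answers) != 10:
        raise ValueError("expected exactly 10 checklist answers")
    # One point for each "Yes".
    score = sum(bool(a) for a in answers)
    if score >= 8:
        tier = "Compliance-ready architecture"
    elif score >= 5:
        tier = "Moderate risk"
    else:
        tier = "High compliance exposure"
    return score, tier
```

For example, seven "Yes" answers and three "No" answers give a score of 7, which falls in the moderate-risk tier.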

Actionable takeaway:

Compliance readiness depends far more on architecture discipline than model selection.

Why Compliance-First AI Architecture Is Becoming Mandatory

The regulatory landscape around AI is evolving rapidly.

European regulators are already expanding guidance on automated decision systems, while healthcare and financial regulators increasingly expect explainability and auditability for AI-assisted processes.

Industry experts anticipate several trends:

- mandatory documentation of AI decision systems

- expanded audit requirements for AI workflows

- stronger vendor accountability rules

Organizations that treat AI governance as an afterthought will face painful retrofits later.

Those that design compliance-first architecture from the start will scale AI with far less operational friction.

Key insight:

AI regulation will not eliminate innovation — it will eliminate uncontrolled deployments.

FAQ

What is AI compliance risk?

AI compliance risk refers to the possibility that an AI system violates regulatory requirements related to data privacy, security, or governance. This can occur when sensitive data is processed without proper controls, audit trails are missing, or AI vendors fail to meet regulatory obligations under frameworks like GDPR, HIPAA, or SOC 2.

Why do AI deployments create GDPR compliance challenges?

AI systems often process natural language prompts that contain personal data. If those prompts are sent to external AI providers without proper data processing agreements, geographic data controls, or retention policies, organizations may violate GDPR requirements related to international data transfers, transparency, and lawful processing.

Are AI systems allowed under HIPAA?

AI systems can be used in healthcare environments, but they must meet strict HIPAA safeguards. This includes secure infrastructure, encrypted data handling, access controls, and business associate agreements (BAAs) with vendors that process protected health information (PHI). Without these safeguards, AI systems could expose patient data.

What SOC 2 controls apply to AI systems?

SOC 2 examinations assess controls against the Trust Services Criteria: security, which is always in scope, plus availability, processing integrity, confidentiality, and privacy where the organization includes them. For AI systems, this means maintaining strong access controls, secure data handling, detailed logging, and documented governance processes to ensure AI interactions are auditable and controlled.

How can companies reduce AI compliance risk?

Organizations reduce AI compliance risk by implementing governance from the start. This includes mapping prompt data flows, deploying AI in controlled environments, logging AI interactions, reviewing vendors for compliance, and establishing clear policies for acceptable AI usage across departments.

Do compliance teams need to review AI vendors?

Yes. AI deployments often rely on multiple vendors including model providers, automation platforms, and communication tools. Each vendor may process sensitive information, so compliance teams must evaluate their security practices, data handling policies, and regulatory certifications before integration.

Conclusion

The greatest risk in enterprise AI adoption isn’t model accuracy.

It’s governance blind spots.

AI systems process information differently than traditional software. They transform unstructured prompts into decisions, actions, and automated workflows — often across multiple vendors and infrastructure layers.

Without intentional compliance architecture, those interactions create invisible risk.

Organizations that succeed with AI treat it like critical infrastructure, not an experimental tool.

They design systems where data classification, audit logging, vendor governance, and policy enforcement exist before the first deployment.

As regulators expand oversight of automated decision systems, this distinction will become increasingly important.

AI innovation will continue to accelerate.

But the organizations that scale it safely will be the ones that built compliance into the architecture from the very beginning.

If your organization is exploring AI deployments, a structured compliance readiness audit is often the fastest way to identify governance gaps before they become regulatory problems.